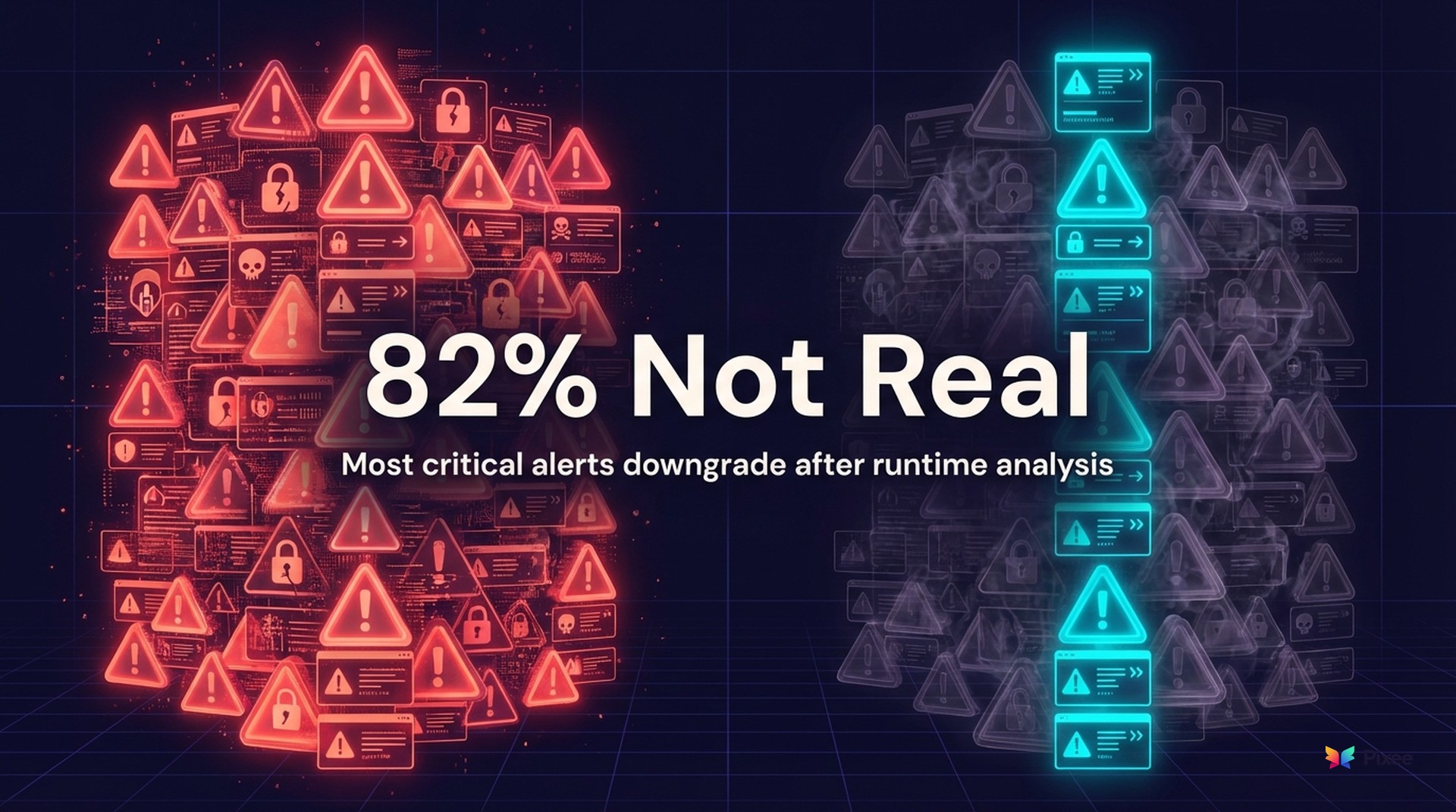

87% Run Exploitable Code in Production, and 98% of "Critical" Alerts Downgrade at Runtime

Your team triaged how many criticals last month? Fifty? A hundred? Datadog's 2026 State of DevSecOps report says 98% of critical .NET vulnerabilities weren't exploitable in production -- a finding that aligns with what we covered in this week's AppSec Weekly briefing.

Not "low priority." Not "acceptable risk." Literally unreachable by an attacker in the running environment. Your best engineers spent most of their critical-response hours on vulnerabilities that couldn't hurt anyone.

That .NET number is the extreme end, but the pattern holds across stacks: only 18% of vulnerabilities labeled "critical" stayed critical after runtime analysis. The other 82% were real vulnerabilities in theoretical scenarios that never match production reality.

Meanwhile, 87% of organizations run at least one exploitable vulnerability in production, affecting 40% of their services. Vulnerabilities that can hurt you are sitting in backlogs behind the ones that can't.

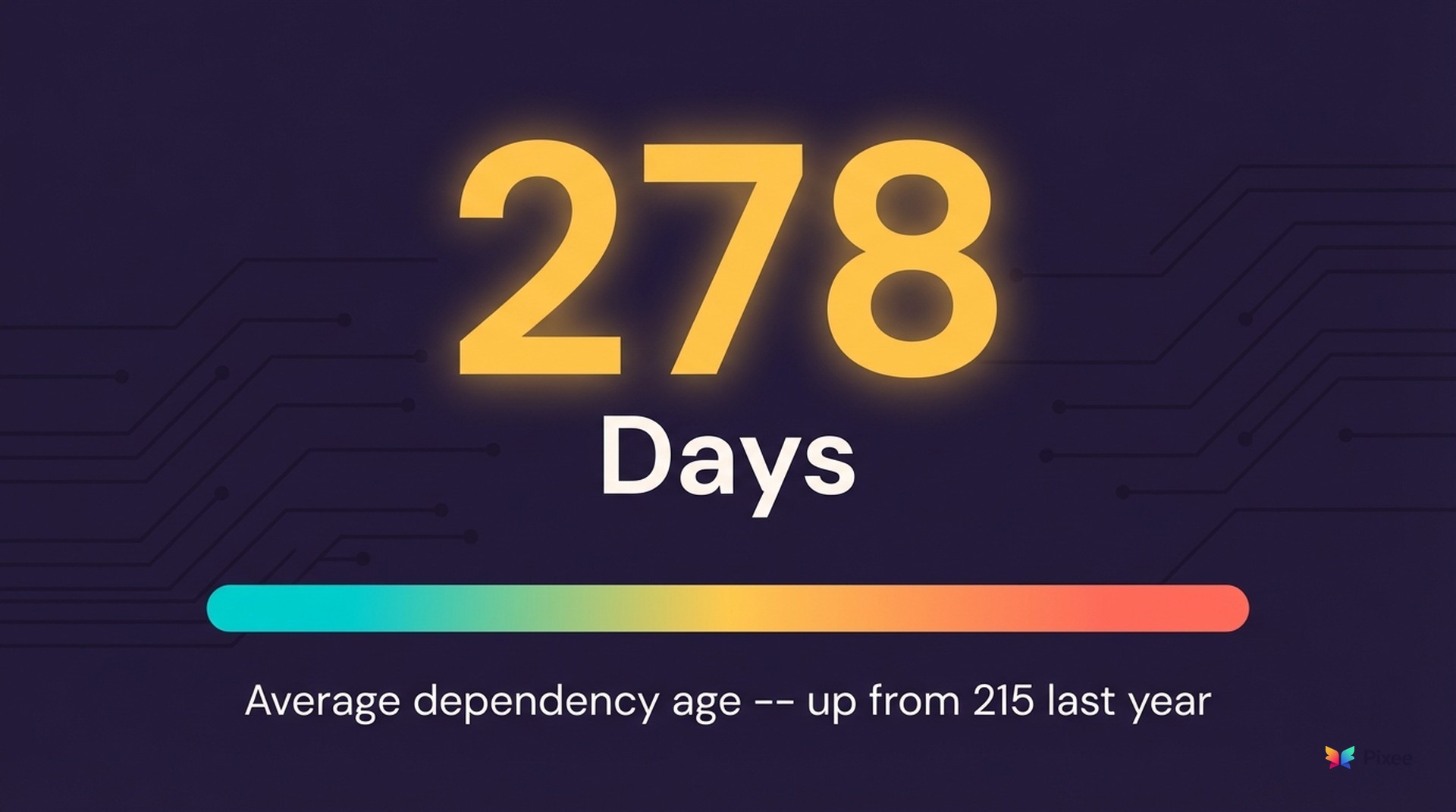

The Dependency Velocity Mismatch Eating Your Backlog

Dependencies average 278 days out-of-date, up from 215 days last year. Nearly nine months of accumulated security debt per component.

Java services lead vulnerability exposure at 59%, followed by .NET at 47%. And 42% of services rely on libraries no longer actively maintained -- a pattern we explored in depth in why 77% of your code coming from third-party dependencies is a ticking time bomb.

Modern development teams deploy multiple times per day, but security remediation operates on quarterly cycles. Dependencies sit unpatched for nine months while new features ship weekly. Known vulnerabilities pile up because teams can't prioritize when everything screams "critical."

Scanning isn't the bottleneck. Translation is: from "detected" to "matters in production" to "fixed." Teams know about these issues. They're drowning in alerts. With every sprint cycle, the gap between "known" and "resolved" widens because new deployments introduce fresh dependencies before old ones get patched.

Why CVSS Scoring Breaks Down in Production Environments

CVSS treats all environments as identical. A SQL injection receives the same 9.8 whether it's in a public-facing API processing raw user input or an internal service behind three authentication layers and a WAF.

Consider what runtime context reveals. A "critical" SQL injection might be:

Traditional scoring can't account for any of this. It assumes worst-case: unauthenticated access, direct user input, no defensive layers. That model made sense when applications were simpler. Today, it generates noise at industrial scale.

Runtime analysis inverts this. Instead of assuming maximum exploitability, it tests actual exploitability in your environment. Can an attacker reach this code? Do they have the required privileges? Are defensive measures in place? Only after answering those questions can you determine real risk.

This explains the 98% downgrade for .NET criticals. Those aren't false positives in the traditional sense. The code is vulnerable. But it isn't exploitable given the defensive posture of the running environment.

The Software Supply Chain Security Dimension

Prioritization problems extend beyond application code. Datadog found that 71% of organizations never pin GitHub Actions to commit hashes, exposing their entire CI/CD pipeline to supply chain attacks. Meanwhile, 1.6% of npm-using organizations deployed malicious dependencies this year -- a small percentage representing thousands of compromised deployments.

Security practices that worked at smaller scales collapse under modern velocity. Pinning Actions to commit hashes is a known best practice, but 71% skip it. Manual dependency review is security gospel, but 1.6% still deploy malicious packages. Knowledge doesn't close the gap. Velocity widens it.

When 40% of production services carry exploitable vulnerabilities and dependencies sit unpatched for nine months, it becomes a governance issue. Boards ask: "What's our exposure?" Most security teams' honest answer: "We know about thousands of vulnerabilities, but we can't tell you which ones matter."

The Vicious Cycle of Vulnerability Misprioritization

When most "critical" alerts don't represent real production risk, the resource drain compounds invisibly. The hidden cost of triage labor is staggering: security teams spend 80% of their time on triage rather than fixes, burning through engineering hours on theoretical threats while exploitable code ships to production. Engineers context-switch between feature work and vulnerability fixes that don't improve security posture. Developer trust erodes: after the third "drop everything" critical turns out unexploitable, engineers stop treating security tickets as urgent. You've trained your own team to ignore your alerts.

The opportunity cost extends beyond labor. While teams chase false priorities, strategic initiatives stall. Architecture reviews, threat modeling, security automation -- the work that actually reduces risk gets pushed to next quarter because the "critical" queue never empties.

This creates a feedback loop. Teams fall further behind on strategic work, which increases technical debt, which generates more alerts, which consumes more triage capacity. Backlogs grow faster than teams can clear them, regardless of hiring or tooling investments.

Every additional scanner makes this worse. More tools, more findings, more triage time, less capacity for strategic work that would shrink your attack surface. Teams that break this cycle don't do it by adding detection. They start by solving prioritization.

The 5-Minute Reachability Gut-Check

You don't need new tooling to start filtering signal from noise. For any critical that lands in your queue, run it through five questions:

Check your SAST tool's call graph or data flow analysis. If the vulnerable function is only called by internal batch processes or admin utilities, it's not internet-reachable. Many SAST tools already surface this in their findings detail; most teams skip past it.

Cross-reference against your deployment manifests or service mesh configuration. An RCE in an internal-only microservice is a different risk class than the same RCE in your public API gateway.

A SQL injection requiring admin authentication to reach is not the same risk as one on your login page. Your API gateway logs or auth middleware configuration will tell you this in under a minute.

Check your WAF configuration and any input sanitization upstream of the vulnerable function. If the attack vector is already blocked before it reaches vulnerable code, reduce severity accordingly.

Check your APM data. Dead code with a critical vulnerability is still dead code. If no production traffic has hit that path in a month, it can wait.

How to score: If the answer to question 1 or 5 is "no," deprioritize immediately. If both 3 and 4 are "yes," drop severity by two levels. This isn't perfect reachability analysis, but it's a five-minute filter that will cut your critical queue without buying anything or changing your workflow.

Run this against your next ten criticals. Track results: finding ID, answers to each question, original severity, adjusted severity. If the pattern matches Datadog's data (and it will), you have internal evidence for two things: a repeatable triage filter your team can apply immediately, and a business case for automated reachability analysis that does this at scale. For a more comprehensive framework on scaling this approach, see our triage automation playbook for cutting 2,000 alerts down to 50 actionable findings.

What Runtime Reachability Analysis Tells Us

The opportunity here isn't marginal. If 82% of criticals aren't exploitable in production, reclaiming even half that triage capacity unlocks strategic security work that actually shrinks your attack surface. Teams that layer reachability context on top of existing scanners shift from reactive backlog management to proactive risk reduction -- the same shift outlined in our four-step plan for making your security backlog a solvable problem. Start with the five-question gut-check on your next batch of criticals -- the results will show you exactly how much capacity is available to redirect.

Explore the Full Guide

This post is part of our learn more about triage automation resource center. Explore the full guide for frameworks, benchmarks, and implementation playbooks.

Related Reading

context-driven triage at machine speed

once you know what's exploitable, the next question is resolution