Why Your SAST Tool Cries Wolf — And What to Do About It

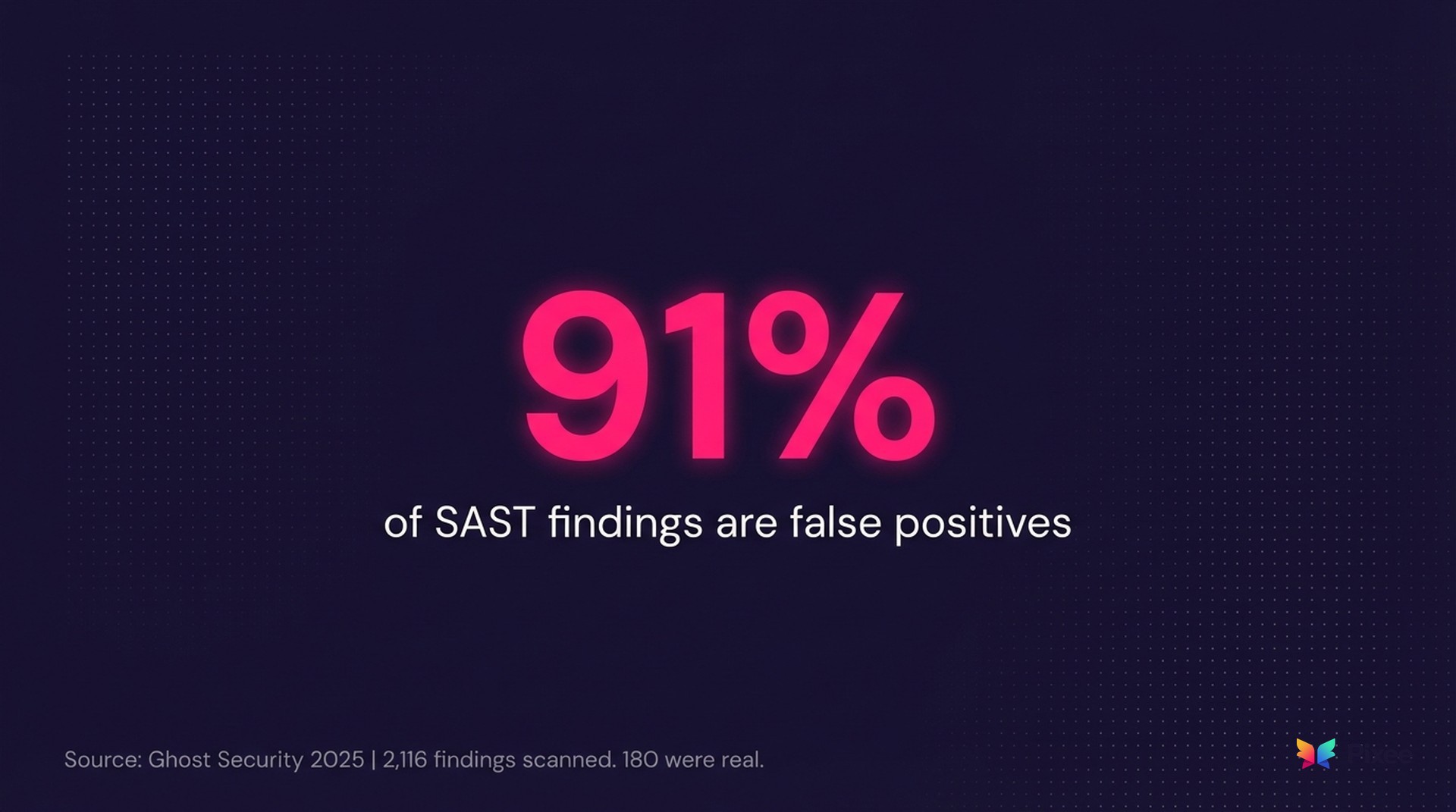

In 2025, Ghost Security scanned public GitHub repositories across Go, Python, and PHP using traditional SAST tools. Of 2,116 flagged vulnerabilities, 180 turned out to be real. That's a 91% false positive rate on open source code. Enterprise environments with custom sanitization and framework-aware configuration perform better — but not by as much as you'd hope.

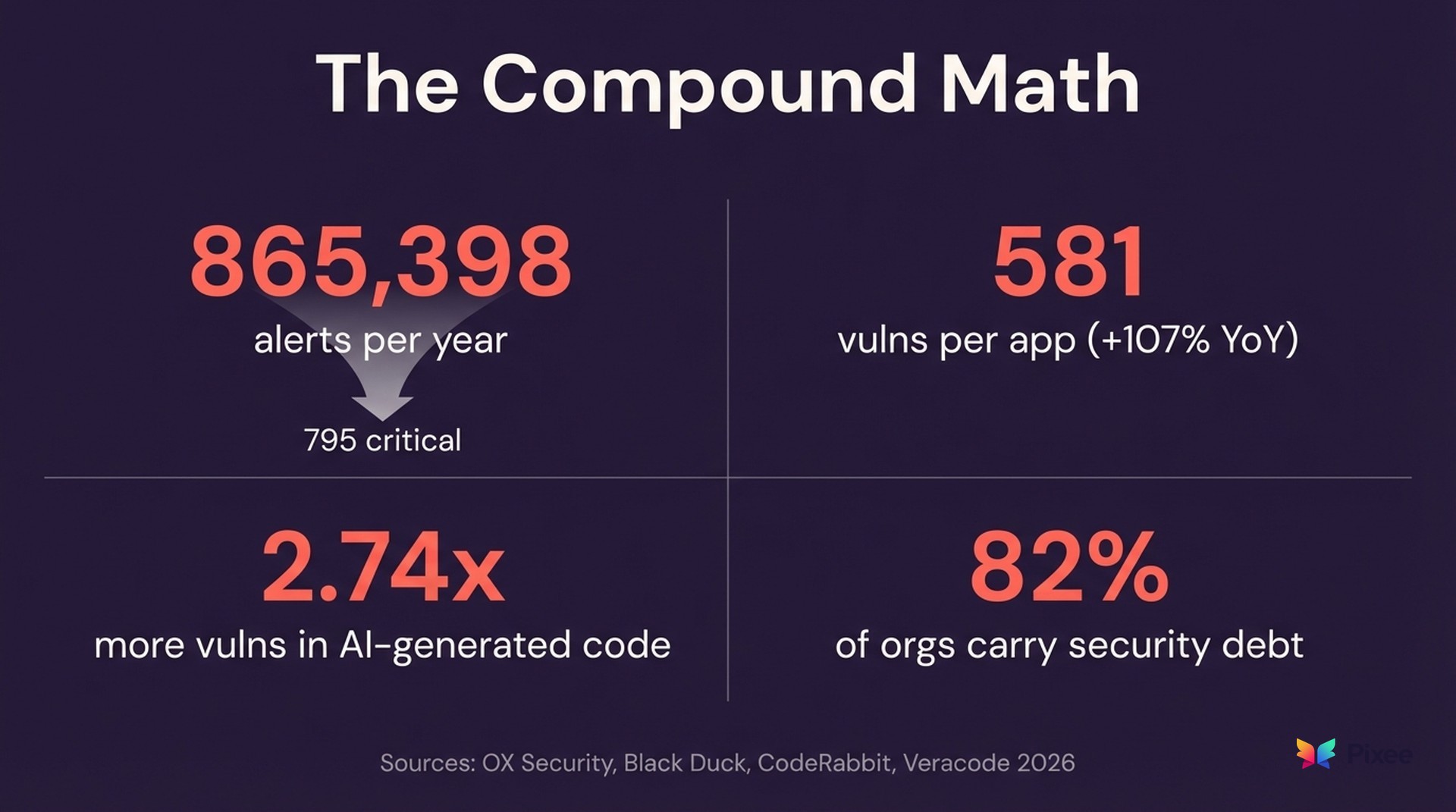

The OX Security 2026 Application Security Benchmark tells the enterprise story: 216 million findings across 250 organizations, and the average enterprise now faces 865,398 security alerts per year. After applying exploitability and reachability analysis, only 795 were critical — 0.092% of the total volume. Whether the false positive rate in your environment is 60% or 91%, the structural problem is the same: SAST flags patterns, not exploits.

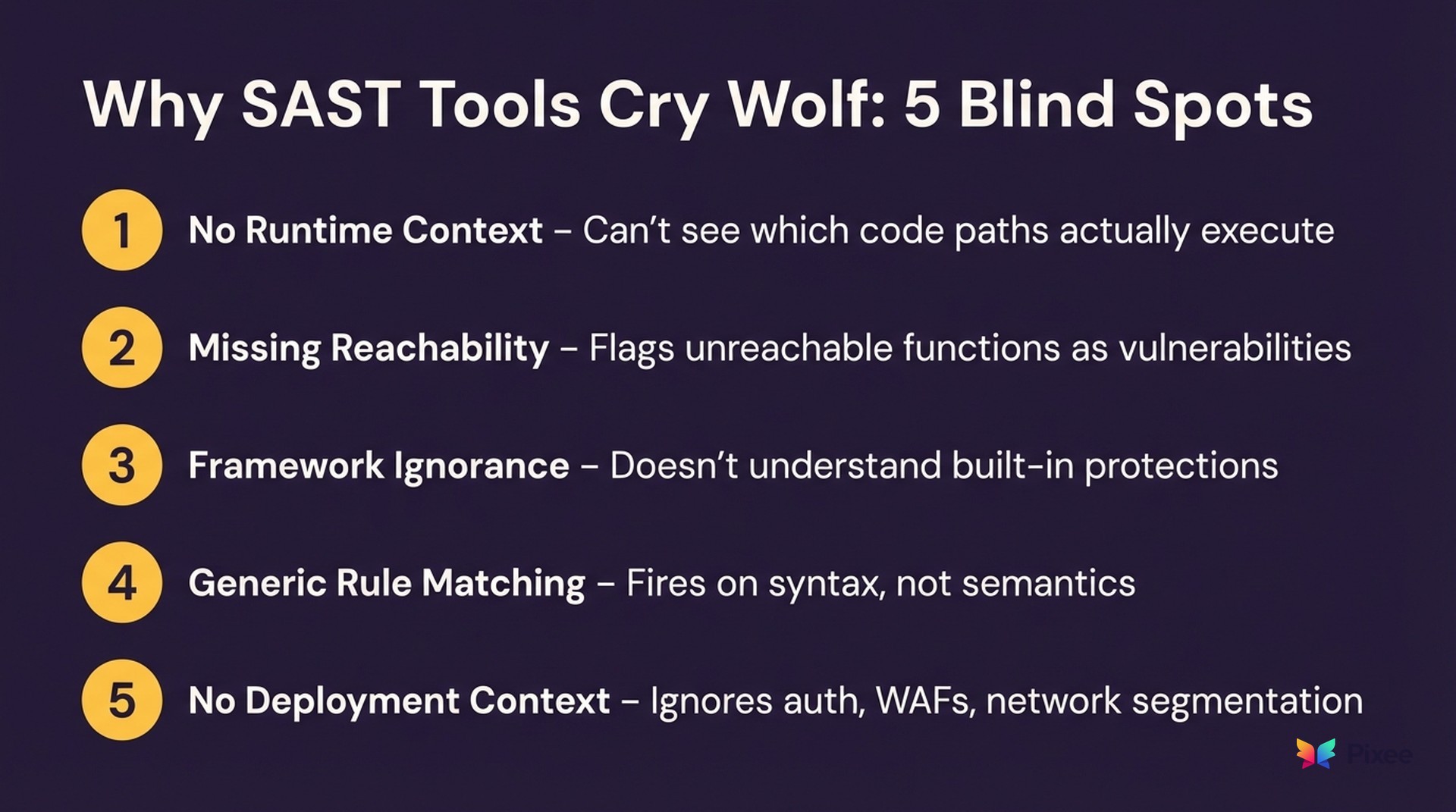

The problem isn't that SAST tools are broken. They're doing exactly what they were designed to do: flag every pattern that could be a vulnerability. The problem is that "could" and "is" are separated by an ocean of context that static analysis can't see.

And when 9 out of 10 alerts are wrong, teams stop looking. That's not alert fatigue. That's rational behavior.

What causes SAST false positives?

Static analysis tools examine source code without executing it. That constraint creates five systematic blind spots:

As CSO Online summarized in January 2026: "SAST flags theoretical flaws that never execute." Contrast Security's research makes the perimeter problem even clearer — WAF signals show less than 0.25% correlation to actual exploits. Static patterns are not runtime reality.

It's getting worse, not better

Every blind spot above compounds when code volume increases. And code volume is exploding.

The Black Duck 2026 OSSRA report found open source vulnerabilities per codebase doubled year-over-year, reaching 581 per application — a 107% increase. Across 950+ audited codebases, 87% contained at least one known vulnerability.

AI-generated code is accelerating the trend. CodeRabbit's 2026 analysis found AI-generated code contains 2.74x more vulnerabilities than human-written code. Snyk's research puts it at 48% of AI-generated code containing security flaws. Yet only 24% of organizations perform comprehensive security, IP, license, and quality evaluations on AI-generated code, according to Black Duck.

Run the math: more code, generated faster, with nearly triple the vulnerability density, scanned by tools that flag nine false alarms for every real finding. The noise isn't growing linearly. It's compounding.

Veracode's 2026 State of Software Security report confirms the downstream effect: 82% of organizations now harbor security debt — an 11% increase from the prior year. High-risk vulnerabilities spiked 36% year-over-year. The report's core finding is blunt: "The backlog of unresolved vulnerabilities is growing faster than teams can eliminate it."

When false positives kill trust

False positives aren't just an efficiency problem. They're a trust problem.

When we talk to security teams, the pattern is consistent. A Head of AppSec at one of the world's largest music streamers described the breaking point: "We weren't very impressed with the results of the SAST and the volume of either false positives or things that didn't have reachability analysis to see if the vulnerability can actually be triggered." Their team shifted evaluation criteria entirely — away from detection coverage and toward signal quality.

At a global retailer in the automotive space, a security engineer described what happens after trust erodes: "Those go back to us. We are not triaging those right now as much as we could be... Generally, it gets ignored." The backlog kept growing. The findings kept arriving. Nobody acted on them.

This is the poisoned well syndrome. Once developers learn that most security alerts are noise, they treat all alerts as noise — including the ones that matter. The SAST tool's credibility drops below the threshold where anyone acts on its output.

The numbers confirm the pattern. Security engineers spend 50-80% of their time on manual triage. Veracode found that only 11.3% of discovered flaws pose real-world danger. The rest is triage theater — teams performing the labor of security without the outcomes. And for teams with SOC 2 audits or regulatory deadlines, a 100K-finding backlog isn't just a productivity drain — it's a compliance exposure that lands on the CISO's desk.

A security engineer at Ciena surfaced the core credibility challenge: "How are you coming up with the confidence rate? Because you don't know how the application uses it." When the tool can't explain why a finding matters in context, the finding doesn't matter to the people who need to act on it.

What actually reduces SAST false positives

There are three tiers of response, each building on the last.

Tier 1: Tune what you have

Most mature AppSec teams have already done some version of this. If you have, and your false positive rate is still above 30%, that confirms the structural limitation — tuning pattern-matching rules has a ceiling. Skip to Tier 2.

Before adding new tools, extract more signal from existing ones.

This is the highest-impact action for most teams. Benchmarking data from Mend.io shows well-tuned SAST deployments can operate at 10-20% false positive rates compared to 60-90% out of the box.

Tier 2: Add runtime verification

Static analysis guesses. Runtime observation confirms.

The combination of static + runtime testing is the most effective technique for false positive reduction documented in the current literature.

Tier 3: Exploitability analysis

The industry is converging on exploitability analysis — closing the gap between "flagged" and "exploitable."

Full disclosure: this is one of the places where Pixee fits. We built an exploitability analysis layer that sits on top of your existing SAST and SCA scanners, applies reachability and security-context evaluation, and surfaces only the findings that are actually exploitable in your environment.

What "good" actually looks like

If you're measuring your program, here's the calibration:

But false positive rate alone is a trailing indicator. The metric that matters most is developer response rate — what percentage of security findings result in an actual fix? If developers are closing findings as "won't fix" or "not applicable" at rates above 50%, your signal-to-noise ratio has already collapsed regardless of what the FP rate metric says.

What the shift from detection to exploitability actually looks like

The SAST false positive problem isn't going away on its own. Code volume is increasing, AI-generated code is multiplying the vulnerability surface, and rule-based pattern matching hasn't fundamentally changed in a decade.

The path forward isn't better rules. It's better context. Exploitability analysis, reachability verification, and security-context evaluation collapse the gap between "theoretically vulnerable" and "actually exploitable."

That's the shift from finding more to finding what matters — and the difference between a security team that triages 865,000 alerts and one that fixes the 795 that count.

Related reading:

Pixee applies exploitability and reachability analysis across 10+ scanner integrations. In POC environments across 50+ companies, teams typically see a 90-95% reduction in actionable alert volume. Run it on your codebase — POC takes less than 2 hours to set up →