85% of Automated Security Fixes Get Dismissed. The Fix Isn't More Fixes.

Your security toolchain is generating more pull requests than ever. Dependabot opens PRs. Snyk opens PRs. Renovate opens PRs. Your shiny new AI coding assistant opens PRs. And your developers? They're ignoring most of them.

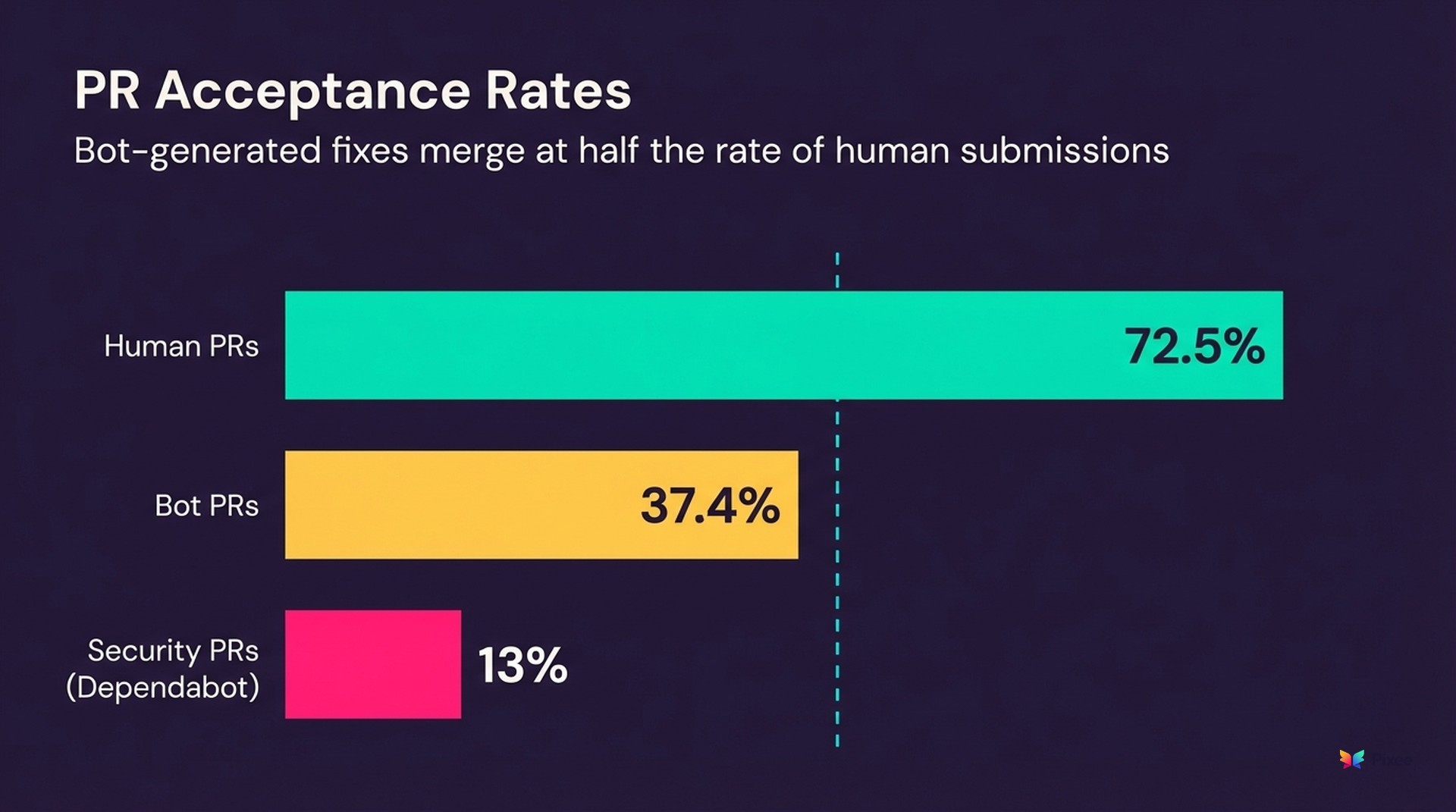

Bot-generated pull requests get accepted at 37.38%, compared to 72.53% for human submissions. That's not a rounding error. That's a nearly 2x gap in acceptance rates — and it gets worse for security-specific fixes. In JavaScript open-source projects, Dependabot security PRs hit just 13% adoption.

An anonymous engineering leader put it best: "We didn't get faster. We just moved the traffic jam."

The security PR merge rate problem is hiding in plain sight. The security industry has spent a decade building better detection. Better scanners. Better alerts. But nobody stopped to ask: what happens when you generate a fix that developers won't merge? You get an expensive notification system that creates work without reducing risk.

Why Do Developers Reject Automated Security Fixes?

Here's what the data actually shows: 86% of developers don't view security as a top priority when writing code. Before you read that as apathy, consider what it really means. Developers aren't ignoring security because they don't care. They're making a rational resource allocation decision based on every experience they've had with security tooling.

When 85% of issues flagged by AI code review get dismissed — per Arnica's analysis of their own code review platform data — that tells you something about signal quality, not developer negligence. When 69% of developers aren't even aware that vulnerable dependencies exist in their projects, that tells you the tooling failed at its most basic job: communicating risk in a way that registers.

The core problem is what Arnica's research calls the "trust paradox." Developers maintain low merge rates for automated security fixes because generic fixes consistently fail to account for three things:

This isn't theoretical. Engineering leaders describe it plainly:

"Developers voted with their feet a long time ago. You can go to the marketplace and for all these IDEs, developers don't install security tools." — VP of Engineering, GuidePoint Security

"Generally, it gets ignored. Those go back to us. We are not triaging those right now as much as we could be." — Security Engineer, AutoZone

Developers don't distrust automation. They distrust automation that doesn't understand their codebase. There's a meaningful difference — and this developer security friction explains why 81% of organizations ship vulnerable code. The capacity to fix exists, but the fixes being offered aren't worth the review time.

The AI-Induced Bottleneck: More Code, Slower Reviews

If you thought AI code generation would help, the early data is sobering.

GitHub's own research with Accenture found that developers accepted just 30% of Copilot's suggestions in real-world enterprise deployment. That's a 70% rejection rate from the most widely adopted AI coding tool on the market.

But acceptance rate is only half the story. The downstream effects on code review are severe:

AI accelerated code creation and moved the bottleneck downstream to code review. Half of all PRs already sit idle for more than 50% of their lifespan — that was true before AI coding assistants flooded the queue with more changes to review.

Now add security PRs to that queue. They're competing for review bandwidth against feature work, AI-generated code, dependency updates, and refactoring PRs. Security PRs that lack context, lack explanations, and lack proof of safety get deprioritized or abandoned.

GitHub's March 2026 launch of Copilot Coding Agent for Jira makes this explicit: the tool generates draft PRs directly from Jira tickets, further accelerating PR creation while code review remains the acknowledged bottleneck.

The result is what one team described as the "LGTM reflex" — review fatigue that leads to either rubber-stamping (dangerous for security changes) or abandoning the review queue entirely (leaving security PRs to rot).

How Current Tools Deliver Fixes — and Where They Fall Short

Not all fix delivery mechanisms fail equally. Here's what the competitive landscape looks like:

Dependabot and Renovate automate dependency PRs at scale. Dependabot achieves roughly a 54% merge rate, but with significant noise: its PRs are frequently superseded by newer Dependabot PRs before anyone reviews them. Renovate's configurability (grouping, scheduling, merge confidence scoring based on millions of CI outcomes) partially addresses this, but it requires serious investment in configuration.

The strongest counter-example comes from Flink, where an engineering team built "DuneBot" on top of Renovate and merged over 12,000 PRs across 700+ repositories by eliminating the CODEOWNERS bottleneck entirely. That proves the merge rate problem is solvable for dependency updates — but it took custom automation on top of automation to get there, and it doesn't extend to SAST, DAST, or code-level vulnerability fixes where the fix itself requires codebase understanding.

Snyk generates fix PRs with compatibility scores, giving developers data to assess risk. But Snyk sometimes creates custom patches when direct upgrades would break APIs — a reasonable tradeoff that nonetheless introduces maintainability concerns.

SonarQube and Checkmarx take a fundamentally different approach: dashboard-based findings that require developers to context-switch from their IDE to a separate interface for manual remediation. No PR, no fix — just a finding and a recommendation. The gap between "identified" and "fixed" in these workflows is where vulnerabilities go to die.

AI coding assistants (Copilot, Cursor, etc.) offer inline suggestions but with no security-specific context. Developers reject most of these suggestions for the same reasons they reject bot PRs — no codebase awareness, no risk explanation, no reason to trust.

Every tool on this list shares one failure mode: generating fixes without understanding the codebase context that determines whether those fixes are safe, correct, and worth merging.

How Do You Improve Security PR Merge Rates?

Generating more fixes won't help. Generating fixes worth reviewing will.

Triage before you fix. When 71-88% of scanner findings are false positives, opening PRs for all of them actively damages developer trust. The single highest-leverage action is reducing the volume of fix PRs to only those addressing real, exploitable vulnerabilities. Fewer, higher-signal PRs get more attention, faster reviews, and higher merge rates. This is the unsexy foundation that makes everything else work.
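
As a rough illustration of that triage step, here is a minimal sketch in Python. The `Finding` fields (`reachable`, `in_production_code`) and the severity threshold are assumptions made for this example, not the schema of any particular scanner; a real pipeline would map its own scanner output onto something similar.

```python
from dataclasses import dataclass

# Hypothetical finding shape; real scanners expose different fields.
@dataclass(frozen=True)
class Finding:
    rule_id: str
    severity: str             # "low", "medium", "high", or "critical"
    reachable: bool           # is the vulnerable code path actually called?
    in_production_code: bool  # False for tests, fixtures, and examples

SEVERITY_RANK = {"low": 0, "medium": 1, "high": 2, "critical": 3}

def worth_a_fix_pr(finding: Finding, min_severity: str = "high") -> bool:
    """Return True only for findings that look real and exploitable."""
    return (
        finding.reachable
        and finding.in_production_code
        and SEVERITY_RANK[finding.severity] >= SEVERITY_RANK[min_severity]
    )

def triage(findings):
    """Split findings into those that get a fix PR and those that are only logged."""
    to_fix = [f for f in findings if worth_a_fix_pr(f)]
    to_log = [f for f in findings if not worth_a_fix_pr(f)]
    return to_fix, to_log
```

The point of the sketch is the shape of the decision, not the exact rules: everything that fails the filter is still recorded, but it never reaches a developer's review queue as a PR.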

Match codebase context. A fix that respects your dependency versions, your test patterns, your code style, and your project structure looks like something a colleague wrote — not something a bot generated. Context-aware fixes reduce review friction because developers can evaluate correctness against patterns they already understand.

Provide merge confidence signals. Renovate's approach of scoring fix safety based on aggregated CI outcomes from millions of builds points in the right direction. Developers need quantitative evidence that a change won't break anything, not just a bot's assertion that a vulnerability exists.
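
To make the idea of a confidence signal concrete, here is a deliberately simple sketch that turns aggregated CI outcomes into a label. This is not Renovate's scoring method; the thresholds, the labels, and the `min_samples` cutoff are all assumptions chosen for illustration.

```python
def merge_confidence(passed: int, failed: int, min_samples: int = 20) -> str:
    """Label an update by how often CI passed for it across observed builds.

    Thresholds and labels are illustrative assumptions, not a vendor's algorithm.
    """
    total = passed + failed
    if total < min_samples:
        # Too few builds to say anything quantitative.
        return "unknown"
    pass_rate = passed / total
    if pass_rate >= 0.98:
        return "high"
    if pass_rate >= 0.90:
        return "medium"
    return "low"
```

Returning "unknown" for small samples matters as much as the thresholds: a signal that claims certainty from five builds would erode trust the same way a noisy scanner does.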

Respect review ergonomics. Small, focused PRs with clear explanations outperform bulk dependency updates. Every fix PR should explain what vulnerability it addresses, why the fix is correct, and what changed — in language developers can evaluate without opening a separate scanner dashboard.
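
One way to enforce that structure is to generate every fix PR description from the same three questions. The sketch below is a hypothetical helper, and its section titles are assumptions rather than a template from any specific tool.

```python
def render_pr_body(vulnerability: str, rationale: str, changes: list[str]) -> str:
    """Build a plain-text PR description that answers the three review questions."""
    lines = [
        "What this fixes",
        vulnerability,
        "",
        "Why this fix is correct",
        rationale,
        "",
        "What changed",
    ]
    # One bullet per change keeps the diff summary scannable.
    lines += [f"- {change}" for change in changes]
    return "\n".join(lines)
```

A reviewer who can answer all three questions from the description alone has no reason to open a separate scanner dashboard.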

Build trust incrementally. The first fix a developer reviews from any automated system determines whether they'll review the second one. Consistent quality over time creates a positive feedback loop. Inconsistent quality — even one bad fix in a batch of ten — reinforces the "ignore all bot PRs" heuristic.

Keep friction where it is appropriate. Not all security PRs should merge fast. A dependency bump that touches authentication middleware deserves full review with security team input. A provably safe patch to a serialization vulnerability with passing CI can be auto-merged with a confidence gate. The goal isn't eliminating friction; it's calibrating friction to actual risk. Critical and exploitable findings get full scrutiny. Low-risk, high-confidence fixes get a fast lane.
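
The calibration described above can be sketched as a small routing function. The lane names, the `SENSITIVE_PREFIXES` paths, and the confidence labels are all hypothetical; each team would substitute its own definitions of sensitive code and acceptable evidence.

```python
from enum import Enum

class Lane(Enum):
    FULL_REVIEW = "full review with security team input"
    STANDARD = "standard review"
    FAST = "auto-merge behind a confidence gate"

# Hypothetical path prefixes for code where a mistake is costly.
SENSITIVE_PREFIXES = ("auth/", "middleware/auth/", "crypto/")

def choose_lane(changed_paths, severity, exploitable, ci_passed, confidence):
    """Pick a review lane so that friction tracks actual risk."""
    touches_sensitive = any(
        path.startswith(SENSITIVE_PREFIXES) for path in changed_paths
    )
    # Sensitive code and critical, exploitable findings always get full scrutiny.
    if touches_sensitive or (severity == "critical" and exploitable):
        return Lane.FULL_REVIEW
    # The fast lane requires both passing CI and a high confidence signal.
    if ci_passed and confidence == "high":
        return Lane.FAST
    return Lane.STANDARD
```

Note the ordering: the full-review checks run first, so no amount of CI evidence can push a change to authentication code into the fast lane.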

"Scheduling the developers' time to actually fix or remediate these findings is the real challenge." — Security Engineer, Checkmarx customer

The challenge isn't making security work disappear. It's making it proportional to actual risk.

Organizational dynamics — training gaps, priority misalignment, security-as-separate-function — compound the technical problem. But those are decade-old conversations. The leverage point is the fix itself.

Three Things You Can Do This Week

If you're a VP of Engineering watching security PRs stack up in your team's review queue, start here:

The merge rate problem isn't a developer problem. It's a signal quality problem. Fix the signal, and developers will fix the code.

Related reading:

• managing Pixeebot activity with the dashboard: How the dashboard addresses PR management

• design-first security approach