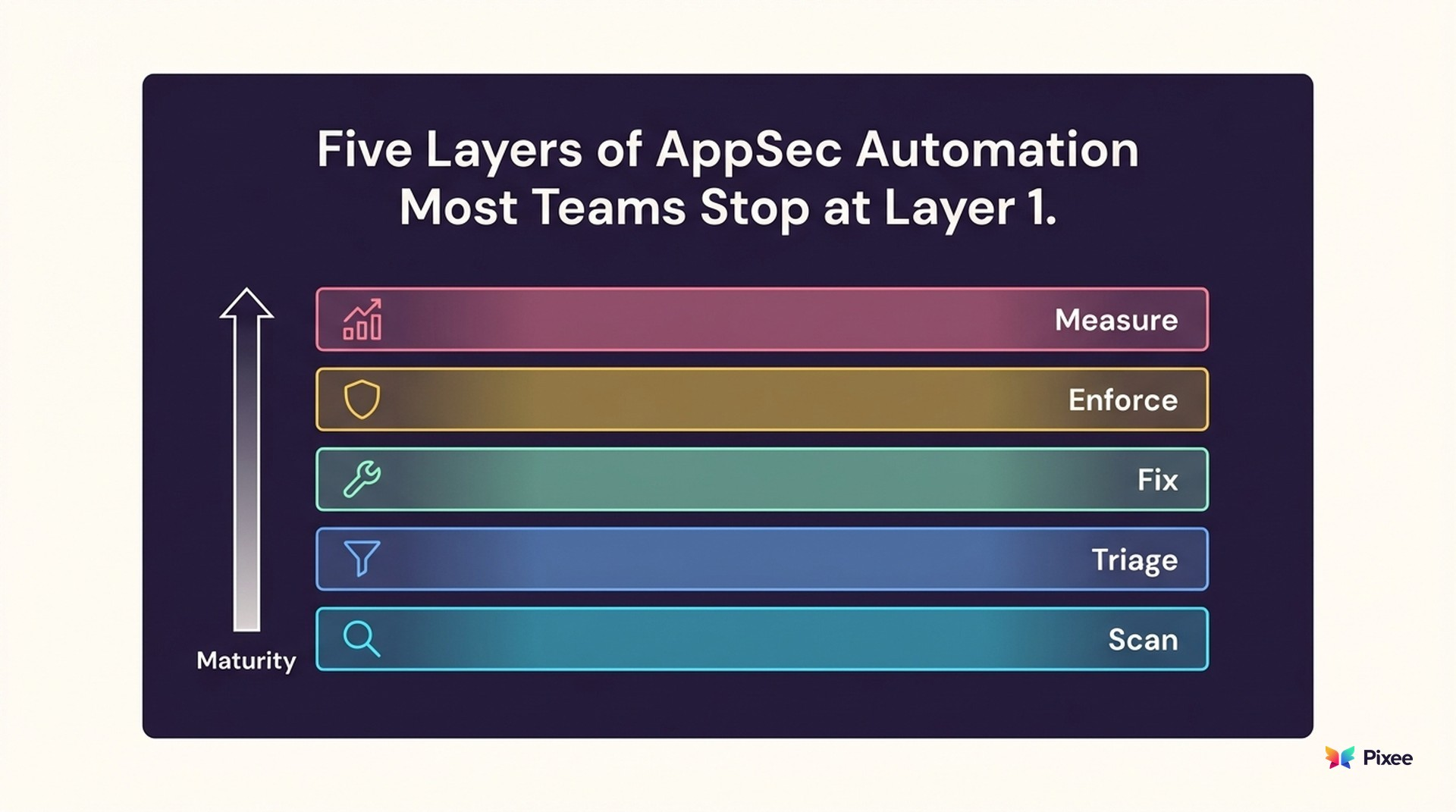

Application Security Automation: The Five Layers Most Teams Are Missing

Most teams automated Layer 1 and declared victory. Four layers remain.

Application security automation is not a single capability. It is a stack. Most organizations have automated the easiest layer (scanning) and skipped the hardest layers (triage and remediation), then wonder why their vulnerability backlogs keep growing despite having "automated security."

Veracode's 2026 State of Software Security report found that 82% of organizations now carry security debt (known vulnerabilities unresolved for over a year), up from 74% in 2025. Sixty percent of that debt is critical: flaws severe enough to cause catastrophic damage if exploited. The fix half-life, the time it takes to remediate half of discovered flaws, sits at 243 days, and that number has risen 327% over the past 15 years of Veracode's reporting.

These numbers exist at organizations that have scanning automation in place. The scanners are automated. The resolution is not. And the math keeps getting worse: 48,000+ CVEs were published in 2025 alone, a 67% increase from 2023 (Checkmarx Future of AppSec 2026). ActiveState's 2026 vulnerability management report concluded that disclosure velocity permanently outpaced remediation capacity in early 2025.

Meanwhile, the industry ratio holds at roughly 1 AppSec engineer per 100 developers (PentesterLab, GitGuardian). The global cybersecurity talent gap has neared 4 million professionals (ISC2). You cannot hire your way out of this.

This guide maps the five layers of application security automation, what each layer does, where most teams stall, and what changes when you automate the full stack.

Layer 1: Scanning Automation (Table Stakes)

Automatically scans code, dependencies, containers, and infrastructure for vulnerabilities. Most teams have this implemented — it is the baseline.

Tools like Snyk, Checkmarx, Veracode, SonarQube, Semgrep, and GitHub CodeQL run automatically in CI/CD pipelines, scanning every commit, pull request, and deployment. Checkmarx's 2026 survey found 77% of organizations have 100+ in-house developers building externally facing apps. At that scale, scanning automation is not optional — it is table stakes.

But scanning alone creates a fragmentation problem. Security pipelines combine SAST, SCA, IaC scanning, and DAST, each generating its own findings in its own format. Gartner's 2025 Innovation Insight on Application Security Posture Management (ASPM) describes the result: "scattered signals across the SDLC" requiring centralized correlation to "cut alert fatigue while connecting code issues to runtime impact." Without that correlation layer, teams face duplicate findings, inconsistent risk scoring, and gaps in visibility.
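
To make the correlation idea concrete, here is a minimal sketch of deduplicating findings from several scanners. It assumes findings have already been normalized into a common shape; the `Finding` fields, tool names, and the "same rule, same file, same line" matching key are illustrative assumptions, not any vendor's actual schema or ASPM algorithm.

```python
from dataclasses import dataclass

# Hypothetical normalized finding. Real scanners emit their own formats,
# so a normalization step is assumed to have run before this point.
@dataclass(frozen=True)
class Finding:
    tool: str
    rule_id: str   # e.g. a CWE identifier after normalization
    file: str
    line: int
    severity: str  # "low" | "medium" | "high" | "critical"

SEVERITY_RANK = {"low": 0, "medium": 1, "high": 2, "critical": 3}

def correlate(findings):
    """Merge findings that report the same weakness at the same location.

    Keeps the set of reporting tools and the highest severity any tool assigned.
    """
    merged = {}
    for f in findings:
        key = (f.rule_id, f.file, f.line)
        entry = merged.setdefault(key, {"tools": set(), "severity": f.severity})
        entry["tools"].add(f.tool)
        if SEVERITY_RANK[f.severity] > SEVERITY_RANK[entry["severity"]]:
            entry["severity"] = f.severity
    return merged

raw = [
    Finding("sast-a", "CWE-89", "app/db.py", 42, "high"),
    Finding("sast-b", "CWE-89", "app/db.py", 42, "critical"),
    Finding("sca", "CWE-502", "requirements.txt", 7, "medium"),
]
unified = correlate(raw)
print(len(raw), "raw findings ->", len(unified), "unique issues")
```

Exact-location matching is the simplest possible key. A production correlation layer would likely need fuzzier matching, since different tools can report the same flaw on slightly different lines.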

A scanner that generates 2,000 findings per sprint has automated the detection of problems. It has not automated the resolution of problems.

How you know you're here: Findings appear automatically in your security dashboard without manual trigger. Nearly every organization above 50 developers has this.

Layer 2: Triage Automation (Where Most Teams Stall)

Automatically determines which scanner findings are actually exploitable in your specific environment. Most teams handle this manually — it is the first major gap.

Of the thousands of findings that scanning automation produces, the majority are false positives. A widely cited Ponemon Institute study (2015) found organizations received approximately 17,000 malware alerts per week, with only 19% deemed reliable, costing enterprises $1.3 million per year in wasted investigation time. A decade later, the problem has not improved. Gartner's 2025 research found that 40% of organizations have "orphaned security alerts": findings that no team owns or acts upon. The tools got faster. The false positive rate did not fall.

This manual triage process consumes 50-80% of AppSec team capacity on filtering work that produces no security improvement. But false positives are only half the problem. Research from The Hacker News' 2026 State of Pentesting report found that "nearly half of remediation time in application security is wasted figuring out who owns a problem and providing the necessary context." Even after a finding survives triage, routing it to someone who can act on it remains a separate unsolved bottleneck.

What automated triage looks like in practice:

Codebase-aware exploitability analysis verifies scanner findings:

- Reachability analysis maps whether vulnerable code is callable from application entry points

- Security control detection identifies existing protections that neutralize theoretical vulnerabilities

- Dependency invocation verification confirms whether vulnerable functions are actually called

- Ownership routing maps findings to code owners, eliminating the "who owns this?" delay
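
The reachability check in the list above can be sketched as a graph traversal. This is a simplified illustration, not a description of how any particular product works: it assumes a call graph has already been extracted as a plain dictionary, and the function names and finding records are hypothetical.

```python
from collections import deque

def reachable_functions(call_graph, entry_points):
    """Breadth-first search over the call graph starting at the entry points."""
    seen = set(entry_points)
    queue = deque(entry_points)
    while queue:
        caller = queue.popleft()
        for callee in call_graph.get(caller, ()):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return seen

def triage(findings, call_graph, entry_points):
    """Split findings by whether the flagged function is reachable at all."""
    reachable = reachable_functions(call_graph, entry_points)
    confirmed = [f for f in findings if f["function"] in reachable]
    dismissed = [f for f in findings if f["function"] not in reachable]
    return confirmed, dismissed

# Hypothetical call graph: caller -> list of callees
call_graph = {
    "handle_request": ["parse_input", "render"],
    "parse_input": ["yaml_load"],
    "legacy_export": ["pickle_load"],  # never called from an entry point
}
findings = [
    {"id": "F1", "function": "yaml_load"},
    {"id": "F2", "function": "pickle_load"},
]
confirmed, dismissed = triage(findings, call_graph, ["handle_request"])
print("confirmed:", [f["id"] for f in confirmed])
print("dismissed:", [f["id"] for f in dismissed])
```

Static call graphs miss dynamic dispatch and reflection, so a real implementation would need to treat "unreachable" as a confidence level rather than a certainty, and record the path it found as the triage rationale.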

The result: a 95% false positive reduction — measured by comparing scanner-reported findings against exploitability-confirmed findings using reachability and control analysis (Pixee Platform Data, 2025). Your team reviews 100 confirmed vulnerabilities instead of 2,000 raw alerts.

Why most teams stall here: Triage automation requires deep code analysis capabilities that most scanner vendors do not provide. Scanners are optimized for detection breadth, not exploitability precision. This is a different technical problem requiring different engineering. Carnegie Mellon's Software Engineering Institute identified this integration challenge directly: "As the number of security tools grows and DevSecOps processes become more complex, many companies realize the hardest challenge is to make sense of all of the test results within a short period of time. For some companies, this task of bringing together the results from all of these tools is a full-time job."

How you know you're here: False positives are eliminated before reaching your security team, and triage decisions include documented rationale (reachability paths, control inventory).

Layer 3: Remediation Automation (The Hardest Layer)

Automatically generates production-ready code fixes for confirmed vulnerabilities. Most teams handle this entirely by hand — it is the critical gap.

The industry baselines tell the story. Veracode's 2026 report measures a fix half-life of 243 days across all scan types. Leading organizations fix half of flaws in 5 weeks; lagging organizations take over a year. Same scan data, same vulnerability types — the difference is entirely in remediation process maturity. And enterprises remediate only about 16% of vulnerabilities per month on average (Cyber Strategy Institute 2026).

After triage identifies what to fix, someone has to write the fix. In most organizations, this means creating a Jira ticket, assigning it to a developer, waiting for the developer to context-switch from feature work, research the vulnerability, write a fix, test it, and submit a pull request. The result: months of elapsed time per finding.

Here is where a less obvious problem surfaces. A 2025 Cloud Security Alliance study found that 68% of developers could not confidently remediate OWASP Top 10 vulnerabilities without external guidance. Remediation automation is not just about speed — it fills a security knowledge gap that hiring alone cannot close. When 1 in 3 organizations report that 60%+ of their code is now AI-written (Checkmarx 2026), and the developers writing prompts for that code lack security remediation skills, the gap compounds.

What automated remediation looks like in practice:

- Convention-matching fix generation analyzes your existing code to produce fixes using your validation libraries, error handling patterns, and architectural approach

- Breaking change detection validates every fix against automated compatibility analysis with 80-90% confidence scoring before a developer sees it

- Root-level SCA resolution resolves dependency vulnerabilities at the root of the tree rather than chasing transitive chains
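
One way to read "root-level" resolution is: given a vulnerable transitive package, find which direct dependency pulls it in, so the fix is a single upgrade at the top of the tree. The sketch below illustrates that reading only. The dependency graph, package names, and the `roots_pulling_in` helper are hypothetical, and it does not attempt version solving.

```python
def roots_pulling_in(dep_graph, direct_deps, vulnerable):
    """Return the direct dependencies whose transitive closure includes `vulnerable`."""

    def depends_on(pkg, seen):
        if pkg == vulnerable:
            return True
        if pkg in seen:  # already explored on this search; also guards against cycles
            return False
        seen.add(pkg)
        return any(depends_on(child, seen) for child in dep_graph.get(pkg, ()))

    # Use a fresh visited set per direct dependency so searches stay independent
    return [d for d in direct_deps if depends_on(d, set())]

# Hypothetical dependency graph: package -> packages it depends on
dep_graph = {
    "web-framework": ["template-lib", "http-core"],
    "template-lib": ["yaml-parser"],
    "http-core": [],
    "cli-tool": ["arg-parser"],
}
direct = ["web-framework", "cli-tool"]
print(roots_pulling_in(dep_graph, direct, "yaml-parser"))
```

Here the vulnerable `yaml-parser` is two levels down, and the only direct dependency to act on is `web-framework`.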

Across 100,000+ PRs from enterprise deployments, Pixee achieves a 76% merge rate, defined as PRs merged without modification within 72 hours of opening (Pixee Platform Data, 2025). Roughly three out of four fixes are merged on first developer review, against an industry baseline where most organizations take months to produce a manual fix at all.

Why this layer is hardest: Generating fixes that developers actually merge requires understanding of code conventions, dependency relationships, framework idioms, and breaking change risk. Merge rates vary significantly by tool category. Purpose-built remediation tools that analyze code conventions and detect breaking changes before PR creation tend to outperform general-purpose AI code suggestions, though independent benchmarks are still emerging. Pixee invests in convention analysis and breaking change detection to close the quality gap.

How you know you're here: Confirmed vulnerabilities generate pull requests automatically and your merge rate on automated fixes exceeds 50%.

Layer 4: Policy Automation

Enforces security policies across repositories, teams, and the organization without manual policy checks. Most teams have partial coverage — merge request gates exist, but broader policy enforcement remains manual.

This layer is thinner than it should be at most organizations. Only 18% of organizations have formal governance policies for AI-generated code (Checkmarx 2026), even as AI-written code becomes the majority of new code entering production. BSIMM16's analysis of 111 organizations found that leading programs are "folding AI into existing software security initiatives — applying familiar controls such as secure design review, policy enforcement, and risk assessment to AI-enabled development." Most organizations have not started.

Policy automation codifies security decisions into enforceable rules:

- Merge gates block PRs that introduce new critical or high-severity vulnerabilities

- SLA enforcement automatically escalates findings that exceed remediation timeframes

- Scanner requirements ensure all repositories run required scanners before deployment

- AI code governance applies security review requirements to AI-generated code at the same rigor as human-written code

- Exception management provides self-service exception requests with approval workflows and expiration dates

Tools like Open Policy Agent (OPA), GitHub's native branch protection rules, and GitLab's security policy framework provide the enforcement mechanism. The harder challenge is organizational: getting engineering leadership to agree on which policies are blocking vs. advisory, and building the political capital to enforce them.
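
As a rough illustration of what "codified" means here, the sketch below expresses a merge gate with expiring exceptions and an SLA check as plain functions. It is not OPA, GitHub, or GitLab policy syntax. The SLA day counts, record fields, and function names are assumptions chosen for the example.

```python
from datetime import date

BLOCKING_SEVERITIES = {"critical", "high"}
# Assumed remediation SLAs in days. Each organization sets its own.
SLA_DAYS = {"critical": 7, "high": 30, "medium": 90}

def merge_allowed(new_findings, exceptions, today):
    """Block the merge if any new critical/high finding lacks an unexpired exception.

    `exceptions` maps finding id -> expiration date of an approved exception.
    """
    blockers = []
    for f in new_findings:
        if f["severity"] not in BLOCKING_SEVERITIES:
            continue  # advisory only
        expiry = exceptions.get(f["id"])
        if expiry is None or expiry < today:
            blockers.append(f["id"])
    return (not blockers), blockers

def sla_breaches(open_findings, today):
    """Return ids of open findings older than the SLA for their severity."""
    breached = []
    for f in open_findings:
        limit = SLA_DAYS.get(f["severity"])
        if limit is not None and (today - f["opened"]).days > limit:
            breached.append(f["id"])
    return breached

today = date(2026, 3, 1)
new_findings = [
    {"id": "N1", "severity": "high"},
    {"id": "N2", "severity": "critical"},
    {"id": "N3", "severity": "low"},
]
exceptions = {"N1": date(2026, 6, 1), "N2": date(2026, 2, 1)}  # N2's exception has expired
allowed, blockers = merge_allowed(new_findings, exceptions, today)

open_findings = [
    {"id": "O1", "severity": "critical", "opened": date(2026, 2, 1)},
    {"id": "O2", "severity": "high", "opened": date(2026, 2, 15)},
]
to_escalate = sla_breaches(open_findings, today)
print(allowed, blockers, to_escalate)
```

The blocking-versus-advisory decision lives in one constant, which is the part engineering leadership has to agree on before any of this can be enforced.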

Without policy automation, security decisions depend on institutional memory and good intentions. Both degrade under pressure.

How you know you're here: Security policies are enforced programmatically (not via email reminders), AI-generated code is subject to explicit governance rules, and exceptions are tracked with expiration dates.

Layer 5: Measurement Automation

Tracks security program effectiveness with metrics that reflect outcomes, not activity. Most teams have minimal coverage here — dashboard metrics exist but rarely measure what matters.

Forrester's 2026 analysis found that 55% of companies could not quantify the impact of their shift-left efforts due to poor metric selection. Most security measurement focuses on activity metrics: number of scans run, findings detected, tickets created. These metrics demonstrate effort, not effectiveness. A program that scans everything and fixes nothing scores well on activity metrics while risk grows.

ISC2's Picklu Paul captures the problem: "Severity without context can distort focus — if everything is urgent, nothing truly is." When 82% of organizations carry security debt (Veracode 2026), CISOs need metrics that measure reduction in exploitable risk, not cumulative vulnerability counts.

Measurement automation tracks outcome metrics:

- Mean time to remediation (actual, per-finding, not estimated)

- Security debt trajectory (net change in unresolved findings aged 1+ year)

- Backlog velocity (net change in open findings per period)

- Fix rate (percentage of confirmed vulnerabilities resolved within SLA)

- Merge rate (developer acceptance of automated fixes)

- False positive rate (percentage of findings that turn out to be non-exploitable after triage)
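
Most of these metrics reduce to simple arithmetic over finding records. The sketch below shows one possible set of definitions, assuming each finding carries an opened date, an optional resolved date, and a triage verdict. The field names and the 30-day SLA are illustrative. Security debt is reported as a count at period end, so the trajectory is the difference between two periods. Merge rate is omitted because it comes from pull request data rather than findings.

```python
from datetime import date
from statistics import mean

def outcome_metrics(findings, period_start, period_end, sla_days):
    """Compute outcome metrics from finding records (hypothetical schema)."""
    confirmed = [f for f in findings if f["exploitable"]]
    resolved = [f for f in confirmed if f["resolved"] is not None]
    days_to_fix = [(f["resolved"] - f["opened"]).days for f in resolved]

    def in_period(d):
        return d is not None and period_start <= d <= period_end

    opened_in_period = sum(in_period(f["opened"]) for f in confirmed)
    resolved_in_period = sum(in_period(f["resolved"]) for f in confirmed)
    aged_open = [
        f for f in confirmed
        if f["resolved"] is None and (period_end - f["opened"]).days > 365
    ]
    return {
        # Actual per-finding time to remediation, averaged
        "mttr_days": mean(days_to_fix) if days_to_fix else None,
        # Share of confirmed vulnerabilities resolved within the SLA
        "fix_rate": sum(d <= sla_days for d in days_to_fix) / len(confirmed) if confirmed else None,
        # Share of all findings judged non-exploitable after triage
        "false_positive_rate": (len(findings) - len(confirmed)) / len(findings) if findings else None,
        # Net change in open confirmed findings during the period
        "backlog_velocity": opened_in_period - resolved_in_period,
        # Unresolved confirmed findings older than one year at period end
        "security_debt": len(aged_open),
    }

findings = [
    {"opened": date(2026, 1, 5), "resolved": date(2026, 1, 15), "exploitable": True},
    {"opened": date(2026, 1, 10), "resolved": date(2026, 2, 19), "exploitable": True},
    {"opened": date(2024, 11, 1), "resolved": None, "exploitable": True},
    {"opened": date(2026, 2, 1), "resolved": None, "exploitable": False},
]
m = outcome_metrics(findings, date(2026, 1, 1), date(2026, 3, 31), sla_days=30)
print(m)
```

Note that none of these numbers depend on how many scans ran or how many tickets were created.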

How you know you're here: Your security dashboards show MTTR trending, security debt trajectory, and fix rate alongside detection metrics — and you can answer the question "is our exploitable risk going up or down?"

The Maturity Model: Where You Are vs Where You Should Be

| Level | Layers Automated | Typical Result |
|---|---|---|
| Level 1: Detection | Scanning only | Findings documented. Backlog grows. MTTR measured in months. |
| Level 2: Prioritized Detection | Scanning + basic severity filtering | Fewer alerts, but false positives still consume 50-80% of triage time. |
| Level 3: Verified Detection | Scanning + triage automation | 95% false positive reduction. Team focuses on real findings. MTTR starts improving. |
| Level 4: Automated Resolution | Scanning + triage + remediation | Confirmed findings generate merged fixes. MTTR drops to days. Backlog shrinks. |
| Level 5: Full Stack | All 5 layers | Policies enforced. SLAs met. Metrics prove effectiveness. Security scales with code volume. |

This five-layer model simplifies established industry frameworks — including OWASP SAMM (5 business functions, 15 practices), OWASP DSOMM (4 maturity levels), BSIMM (111 organizations benchmarked in its 16th edition), and the AWS Security Maturity Model — into the five automation decisions that most directly determine whether a program produces outcomes or just outputs.

In practice, most organizations operate at different maturity levels simultaneously. Your crown-jewel applications may be at Level 4 while internal tools remain at Level 1. This is normal and often the right allocation of limited security resources. The model describes a direction for each application tier, not a single organizational score.

Most organizations are at Level 1 or 2. They have automated what is easy (scanning) and left what is hard (triage and remediation) to manual processes.

AI-generated code compounds this pressure. CodeRabbit's 2026 analysis of 1M+ pull requests found 2.74x more vulnerabilities in AI-assisted repos compared to the same repositories' pre-AI baseline over 12 months. Snyk's controlled study found 48% of AI-generated code snippets contained at least one security issue. Manual triage and remediation cannot keep pace when code volume accelerates faster than team headcount.

The Human Layer: Why Automation Without People Fails

BSIMM16 documents a shift in how leading organizations approach security training: from "periodic, classroom-style instruction to continuous, role-specific enablement." Point-of-need training delivered when a developer encounters a vulnerability in their own code outperforms annual compliance workshops by any measure. Automated remediation PRs with security context serve this function implicitly: every merged fix teaches the developer who reviews it.

An academic systematic review of DevSecOps adoption (ScienceDirect) identified 21 challenges across four themes and found that "the lack of security skills and knowledge of developers" remains the primary barrier. Automation encodes security expertise into the toolchain, but it works best when paired with security champions who provide judgment, context, and escalation paths that no tool can replicate.

The five layers are a force multiplier for your security team, not a replacement for it.

Common Automation Mistakes

Experienced practitioners have seen automation introductions go wrong. Three patterns show up repeatedly in the research:

Automating detection without automating resolution. Veracode's 2026 report calls this the core crisis: development velocity outstrips remediation capacity, and the backlog grows faster than teams can eliminate it. Adding more scanners to a team that already cannot process its findings is not automation — it is automated pile-on.

Introducing tools that increase developer friction. Carnegie Mellon's SEI research found that when security tools are introduced without addressing false positives, development teams disengage from DevSecOps processes entirely. DevOps.com reaches the same conclusion: "When security steps into the picture, the practices lose speed since most of the security practices need human input." If automated security makes developers slower without making them more secure, they will route around it.

Measuring activity instead of outcomes. When 55% of companies cannot quantify the impact of their shift-left efforts (Forrester 2026), the measurement layer has failed. Scan counts and ticket volumes create the appearance of progress. MTTR, fix rate, and security debt trajectory measure actual risk reduction.

Getting Started: Match Automation to Your Bottleneck

If your team spends most of their time triaging: Start with Layer 2 (triage automation). Eliminate the false positive burden before investing in remediation.

If your team knows what to fix but cannot produce fixes fast enough: Start with Layer 3 (remediation automation) for your already-triaged backlog.

If both are bottlenecks: Start with triage. It deploys faster, immediately reduces the remediation queue, and builds organizational confidence in automation before introducing automated fixes.

If you have scanning but no triage or remediation: You are at Level 1. The gap between Level 1 and Level 4 is where months-long remediation cycles live. Closing that gap is the highest-ROI investment in your security program.

Most teams begin with a single scanner integration and expand coverage iteratively — the five-layer model is a direction, not a day-one requirement.
