SOC 2 Evidence Collection: How Automated Remediation Creates Audit-Ready Proof

Your scans prove you looked. They do not prove you fixed anything.

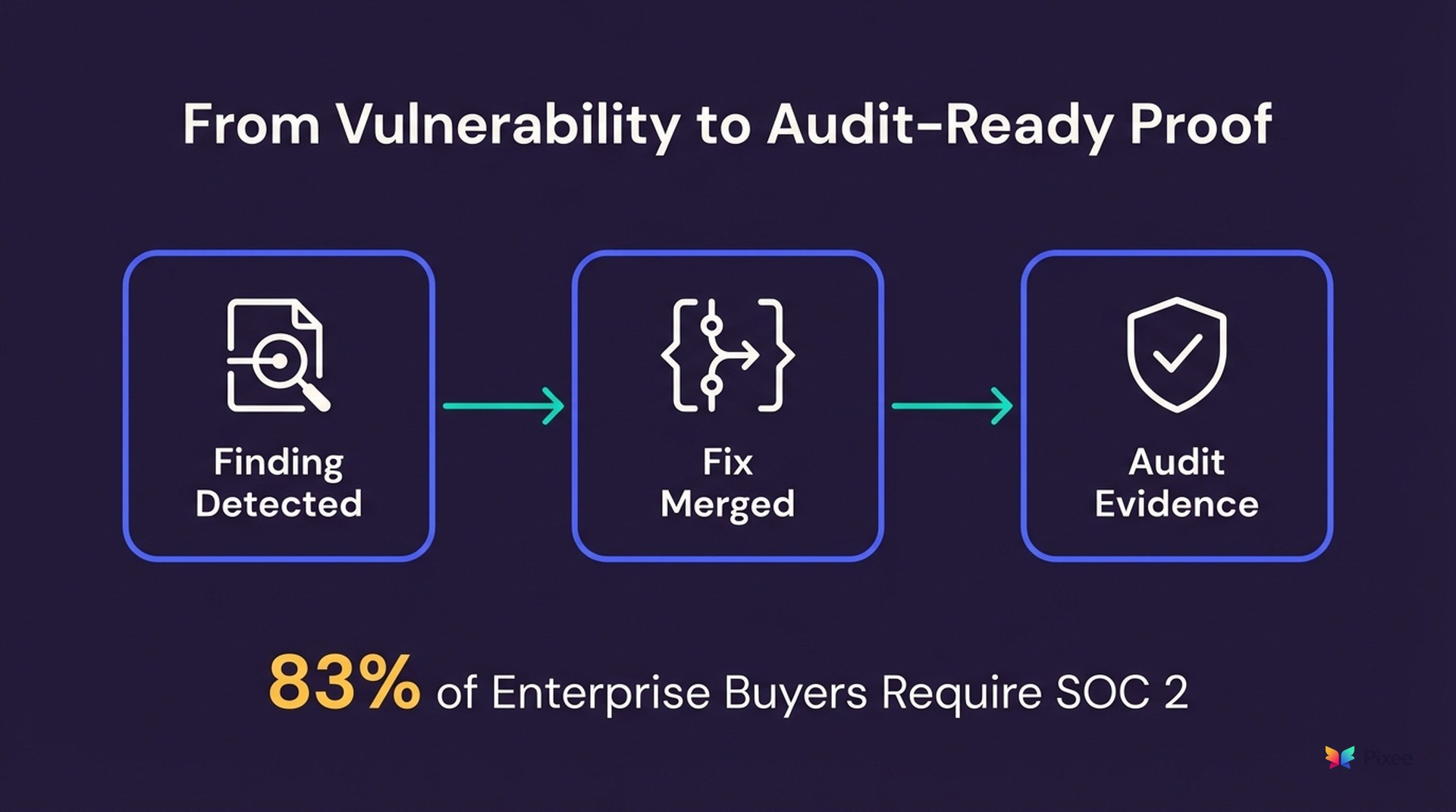

Eighty-three percent of enterprise buyers now require SOC 2 certification from SaaS vendors before signing contracts, climbing to 91% among companies with 5,000+ employees (Vanta, 2025 State of Trust survey). SOC 2 adoption surged 40% in 2024 as companies raced to meet those demands (SOC 2 Certification Directory, 2025). The stakes are concrete: 67% of startups that obtained SOC 2 reported it directly enabled deals they would have otherwise lost, with a median deal size of $120,000.

Getting there is expensive. First-year SOC 2 costs run $30,000 to $150,000 for small and mid-size companies, scaling to $80,000 to $250,000+ for large enterprises when you add audit fees, tooling, consulting, and internal effort (Sprinto; Bright Defense). Engineering teams carry more of that load than most leaders expect: 60 to 80 hours of engineering-specific work on top of the 100 to 200 total internal hours a first audit typically requires (WorkOS; Medium).

SOC 2 Type II audits require evidence that security controls operate effectively over time. You can probably demonstrate that you scan for vulnerabilities. But can you demonstrate that you resolve them consistently, quickly, and with an auditable trail?

Detection is not the gap. Proof of resolution is. Missing documentation is the number-one cause of SOC 2 audit exceptions (Drata, "How to Avoid Audit Exceptions"). Auditors want to see that your team identified vulnerabilities, prioritized them, remediated them, and verified the fix within a reasonable timeframe. A scanner dashboard showing 10,000 open findings does not satisfy that requirement. It documents the opposite.

Automated triage and remediation close this evidence gap by generating auditable artifacts at every step. Triage decisions with rationale, pull requests with security context, merge records with timestamps, and time-to-fix metrics that demonstrate control effectiveness all accumulate without manual effort.

What SOC 2 Type II Actually Requires for Vulnerability Management

SOC 2's Trust Services Criteria address vulnerability management across CC7.1 through CC7.4, each building on the last. Auditors evaluate the entire sequence, not just detection.

CC7.1 — Configuration and Vulnerability Management requires organizations to "identify and manage the security of the system." Auditors interpret this as requiring systematic vulnerability scanning plus evidence that findings are tracked. Most organizations pass here.

CC7.2 — Anomaly Detection and Monitoring captures deviations and irregularities that signal potential control weaknesses (ISMS.online). This is where drift between your stated policies and actual practices becomes visible.

CC7.3 — Evaluation of Security Events explicitly requires that "identified vulnerabilities should be resolved through the development and implementation of remediation activities, which should be documented" (ISMS.online). Note the language: not just resolved, but documented.

CC7.4 — Incident Containment and Remediation requires that "identified vulnerabilities should be remediated through the development and execution of remediation activities" and that organizations periodically review incidents to "identify the need for system changes based on incident patterns and root causes" (AlertLogic).

In practice, auditors evaluate three evidence categories across this chain:

Detection evidence — can you demonstrate that vulnerabilities are identified systematically? Scanner reports, scheduled scan cadences, and coverage metrics satisfy this. Most organizations pass here.

Response evidence — can you demonstrate that identified vulnerabilities are triaged and prioritized? This requires documented triage decisions showing why certain findings were addressed and others deprioritized. Manual triage rarely produces this documentation consistently.

Remediation evidence — can you demonstrate that vulnerabilities were actually fixed within defined SLAs? This requires timestamped fix records tied to specific findings, showing what changed, when, and by whom. Most organizations fail here.

Auditors scrutinize SLA compliance closely. SOC 2 does not prescribe specific fix timeframes; organizations define their own remediation SLAs. Common baselines are 48 hours for critical patches and 30 days for high-severity findings. CISA recommends 15 calendar days for critical vulnerabilities and 30 for high. Whatever SLA you define, you must demonstrate that you consistently meet it (Konfirmity; Blaze InfoSec). Timestamped automated remediation becomes essential evidence here: if you set a 7-day critical SLA, you can prove, per finding, that you met it.
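A per-finding SLA check is easy to reason about once it is written down. The sketch below is a minimal illustration, not any vendor's implementation: the `Finding` fields, the `SLA_WINDOWS` values (the 7-day critical window from the example above plus a 30-day high window), and both function names are assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional

# Hypothetical SLA policy. Replace with the windows your own policy defines.
SLA_WINDOWS = {
    "critical": timedelta(days=7),
    "high": timedelta(days=30),
}


@dataclass
class Finding:
    finding_id: str
    severity: str
    detected_at: datetime
    fixed_at: Optional[datetime]  # None while the finding is still open


def sla_status(finding: Finding, now: datetime) -> str:
    """Return 'met', 'breached', or 'open' for a single finding."""
    deadline = finding.detected_at + SLA_WINDOWS[finding.severity]
    if finding.fixed_at is not None:
        return "met" if finding.fixed_at <= deadline else "breached"
    # Still open: it only counts as breached once the deadline has passed.
    return "breached" if now > deadline else "open"


def sla_compliance_rate(findings: List[Finding], now: datetime) -> Optional[float]:
    """Share of decided findings (met or breached) that met their SLA."""
    statuses = [sla_status(f, now) for f in findings]
    decided = [s for s in statuses if s != "open"]
    if not decided:
        return None  # nothing to report yet
    return decided.count("met") / len(decided)
```

Findings that are still open but inside their window are excluded from the rate so that they neither inflate nor deflate it. Whether your auditor wants them reported separately is worth confirming.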

Picture the audit conversation. "Show me your vulnerability remediation records for Q3." You produce a scanner export showing open findings and a spreadsheet of Jira tickets in various states. The auditor asks, "How do I know these were actually fixed in code?" Silence.

Where Manual Remediation Fails the Audit

Manual remediation creates an evidence gap at three points.

Triage decisions are undocumented. When a security engineer reviews 2,000 findings and decides 50 matter, the reasoning behind dismissing 1,950 lives in their head, not in an auditable system. Auditors cannot verify triage quality without documented rationale.

Fix-to-finding linkage is broken. A developer fixes a SQL injection vulnerability. The fix lives in a pull request. The finding lives in a scanner dashboard. The Jira ticket connecting them was closed with "resolved" and no further context. Six months later, an auditor cannot trace from the finding to the specific code change that addressed it.

Time-to-fix metrics are unreliable. When remediation involves manual triage, manual developer assignment, manual coding, and manual verification, timestamps at each step are approximate at best. The industry-average 252-day MTTR (Veracode State of Software Security, 2025) reflects this reality, and even that number is aggregate, not per-finding.

Compliance Platforms Cover Detection Evidence. Remediation Evidence Is Still Your Problem.

If your team uses Vanta, Drata, or Secureframe, you already have strong tooling for compliance monitoring and evidence collection. These platforms continuously pull data from integrated systems (cloud infrastructure, identity providers, endpoint management) to demonstrate control adherence. Vanta supports 30+ frameworks with 375+ integrations; Drata covers 20+ frameworks with 270+ integrations; Secureframe maps across 25+ frameworks. They are excellent at what they do.

What they do not do is track whether vulnerabilities were actually fixed in code. They monitor whether scans ran. They do not produce the finding-to-fix-to-merge chain that CC7.3 and CC7.4 demand. As one reviewer noted during SOC 2 preparation, "some controls were missing linked automated tests and documents," evidence the platform could not generate because the underlying remediation work was manual and undocumented (Sprinto).

None of these platforms generate pull requests, perform exploitability analysis, or produce triage rationale. They are compliance dashboards, not remediation engines. Adopting one does not guarantee a clean audit either: "using these platforms does not guarantee audit passage... if someone misuses a system or an unknown gap exists, you could still have problems" (Silent Sector).

The positioning is complementary. Compliance platforms handle the monitoring-and-detection evidence layer. Automated triage and remediation fill the gap between "we know about the vulnerability" and "we fixed it with an auditable trail." Together, they close the full evidence chain auditors evaluate under CC7.1 through CC7.4.

How Automated Remediation Creates Audit Evidence

Automated triage and remediation generate structured evidence at every step without additional effort from your security team.

Triage Evidence (Documented Decision Rationale)

Pixee triages findings through exploitability analysis, using reachability and control analysis to separate exploitable findings from noise. Comparing scanner-reported findings against exploitability-confirmed findings shows a 95% false positive reduction (Pixee Platform Data, 2025). Every triage decision produces a record:

- Which findings were confirmed exploitable and why (reachability path documented)

- Which findings were deprioritized and why (security controls detected, code path unreachable, dependency function not invoked)

- Confidence scores for each triage decision

This eliminates the "trust the analyst's judgment" gap. Auditors can review triage rationale for any finding.
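To make "documented rationale" concrete, here is a minimal sketch of what a triage record could look like as structured data. It is not Pixee's schema: the `TriageDecision` fields, the reason codes in `REASONS`, and the helper names are all hypothetical, chosen to mirror the rationale categories listed above.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

# Hypothetical reason codes mapped to whether the finding is exploitable.
REASONS = {
    "reachable_path": True,                    # confirmed exploitable
    "security_control_detected": False,        # deprioritized
    "code_path_unreachable": False,            # deprioritized
    "dependency_function_not_invoked": False,  # deprioritized
}


@dataclass(frozen=True)
class TriageDecision:
    finding_id: str
    reason: str        # one of the keys in REASONS
    rationale: str     # written explanation an auditor can read
    confidence: float  # 0.0 to 1.0
    decided_at: str    # ISO 8601 timestamp

    @property
    def exploitable(self) -> bool:
        return REASONS[self.reason]


def record_decision(finding_id, reason, rationale, confidence, decided_at=None):
    """Build a triage record, refusing decisions that lack a rationale."""
    if reason not in REASONS:
        raise ValueError(f"unknown reason code: {reason}")
    if not rationale.strip():
        raise ValueError("a triage decision needs a written rationale")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    decided_at = decided_at or datetime.now(timezone.utc)
    return TriageDecision(finding_id, reason, rationale.strip(),
                          confidence, decided_at.isoformat())


def to_audit_json(decision: TriageDecision) -> str:
    """Serialize one decision as a JSON line for an append-only log."""
    payload = asdict(decision)
    payload["exploitable"] = decision.exploitable
    return json.dumps(payload, sort_keys=True)
```

Rejecting an empty rationale at write time is the point of the design: a dismissed finding cannot enter the log without the reasoning an auditor will later ask for.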

Remediation Evidence (PR-Level Audit Trail)

Every automated fix generates a pull request containing:

- The specific vulnerability being addressed (linked to scanner finding ID)

- The exact code changes made

- Breaking change analysis with confidence scoring

- Timestamp of fix generation, developer review, and merge

- The developer who approved the merge

This creates a complete chain: finding → triage decision → fix → review → merge → resolved. Every link is timestamped and attributable.
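One way to sanity-check that chain before an auditor does is to verify, per finding, that every link exists and that the timestamps run in order. The sketch below assumes a hypothetical `EvidenceChain` record; the field names are illustrative and would need to be mapped from whatever your scanner and source-control exports actually contain.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass
class EvidenceChain:
    finding_id: str                          # scanner finding ID
    triaged_at: Optional[datetime] = None
    pr_url: Optional[str] = None
    pr_opened_at: Optional[datetime] = None
    approved_by: Optional[str] = None
    approved_at: Optional[datetime] = None
    merged_at: Optional[datetime] = None


REQUIRED = ("triaged_at", "pr_url", "pr_opened_at",
            "approved_by", "approved_at", "merged_at")
ORDERED_STEPS = ("triaged_at", "pr_opened_at", "approved_at", "merged_at")


def chain_gaps(chain: EvidenceChain) -> List[str]:
    """List what is missing or out of order; an empty list means complete."""
    gaps = [f"missing {name}" for name in REQUIRED
            if getattr(chain, name) is None]
    # Compare each recorded timestamp with the previous recorded one.
    present = [(name, getattr(chain, name)) for name in ORDERED_STEPS
               if getattr(chain, name) is not None]
    for (prev_name, prev_ts), (name, ts) in zip(present, present[1:]):
        if ts < prev_ts:
            gaps.append(f"{name} is earlier than {prev_name}")
    return gaps
```

Running `chain_gaps` across every finding closed in the audit period gives you a short list of records to repair before the evidence request arrives.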

Metrics Evidence (Continuous Control Effectiveness)

Automated remediation produces real-time metrics that demonstrate control effectiveness over the audit period:

- Mean time to remediation by severity, measured in days rather than the 252-day industry average

- Fix rate as percentage of confirmed vulnerabilities resolved within SLA

- Merge rate at 76%, defined as PRs merged without modification within 72 hours of opening, measured across 100,000+ PRs from enterprise deployments (Pixee Platform Data, 2025). This demonstrates production-quality fixes, not cosmetic ones.

- Backlog velocity as net reduction in open findings per period

These metrics satisfy the "operating effectively over time" requirement of SOC 2 Type II without requiring manual collection.
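The arithmetic behind these metrics is simple enough to sketch. The functions below are an illustration under assumed input shapes (plain tuples of timestamps), not a description of how any product computes them; the 72-hour window in `merge_rate` follows the merge-rate definition given above.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from statistics import median


def median_days_to_fix(records):
    """records: iterable of (severity, detected_at, merged_at) tuples."""
    by_severity = defaultdict(list)
    for severity, detected_at, merged_at in records:
        # Dividing two timedeltas yields a float number of days.
        by_severity[severity].append((merged_at - detected_at) / timedelta(days=1))
    return {sev: median(days) for sev, days in by_severity.items()}


def merge_rate(prs, window=timedelta(hours=72)):
    """prs: list of (opened_at, merged_at or None, was_modified) tuples.

    Counts PRs merged unmodified within the window, over all PRs opened.
    """
    if not prs:
        return 0.0
    merged_clean = sum(
        1 for opened_at, merged_at, was_modified in prs
        if merged_at is not None
        and not was_modified
        and merged_at - opened_at <= window
    )
    return merged_clean / len(prs)


def backlog_velocity(open_at_start: int, open_at_end: int) -> int:
    """Net reduction in open findings over a period (positive = shrinking)."""
    return open_at_start - open_at_end
```

Using the median rather than the mean keeps one long-lived finding from dominating the reported time to remediation, which matches the per-finding framing auditors tend to ask about.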

This matters more now than it did two years ago. As Konfirmity notes, "what once satisfied auditors with a point-in-time assessment now demands ongoing evidence of control effectiveness throughout the entire audit period." Most enterprise buyers now ask for assurance artifacts before procurement, and deals routinely stall when teams rely on annual audit reports alone. Automated remediation generates evidence continuously, not in a scramble before the audit window.

What Auditors Actually Want to See

Based on common audit requests, here is what automated remediation evidence looks like in practice:

| Auditor Request | Manual Evidence | Automated Evidence |
|---|---|---|
| "Show me vulnerability remediation for Q3" | Scanner export + Jira spreadsheet | Finding-to-merge chain per vulnerability with timestamps |
| "How do you prioritize which vulnerabilities to fix?" | "Our team reviews severity scores" | Documented exploitability analysis with reachability paths |
| "What is your average time to remediation?" | "We think it's about 30 days" | 4.2 days median, per-finding, automatically calculated (Pixee Platform Data, 2025) |
| "How do you verify fixes are effective?" | "Developers test their changes" | Automated compatibility validation + CI pass records |
| "Show me your remediation SLA compliance" | Manual spreadsheet tracking | Real-time dashboard with SLA breach alerts |

Implementation Path

Week 1: Connect your scanners to Pixee (native integration with Snyk, Checkmarx, Veracode, SonarQube, and 10+ others). Automated triage begins generating documented decisions immediately.

Weeks 2-4: Automated fixes generate PRs with full audit trail. Developers review and merge. Evidence accumulates automatically.

Ongoing: Export remediation reports for audit periods. Metrics dashboards show continuous control effectiveness. No manual evidence collection required.
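If you want to see what an audit-period export amounts to, the sketch below filters remediation records to a date window and writes them as CSV. The record keys (`finding_id`, `severity`, `detected_on`, `merged_on`, `pr_url`) and the function name are assumptions for illustration; a real export would follow whatever fields your tooling provides.

```python
import csv
import io
from datetime import date

# Hypothetical column set for the report.
REPORT_FIELDS = ["finding_id", "severity", "detected_on", "merged_on", "pr_url"]


def export_audit_report(records, period_start: date, period_end: date) -> str:
    """Return CSV text for fixes merged inside the audit period (inclusive)."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=REPORT_FIELDS, extrasaction="ignore")
    writer.writeheader()
    # Sort by merge date so the report reads chronologically.
    for record in sorted(records, key=lambda r: r["merged_on"]):
        if period_start <= record["merged_on"] <= period_end:
            writer.writerow(record)
    return buffer.getvalue()
```

For a Q3 request, calling `export_audit_report(records, date(2025, 7, 1), date(2025, 9, 30))` would return only the fixes merged in that quarter, each row still carrying the PR link an auditor can follow.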

Start with your highest-risk repository. Map its current evidence gaps against CC7.1-CC7.4, then evaluate whether automated remediation fills them.

Organizations using compliance automation report a 40 to 60% reduction in audit preparation time and 50 to 70% fewer audit findings on first external audit (Hyperproof, 2025). These figures describe compliance automation in general, not Pixee-specific outcomes. Pixee addresses one slice of that work: proving that identified vulnerabilities were remediated with auditable, timestamped records.

One company achieved SOC 2 in five months instead of twelve, directly closing $4.2 million in new contracts that required certification, with an $80,000 annual tooling cost (Hyperproof). Organizations using security AI and automation extensively report $1.9 million lower data breach costs and 80 fewer days to identify and contain breaches (IBM Cost of a Data Breach, 2025).

Where This Approach Has Limits

- Pixee generates remediation evidence, not full compliance evidence. Automated remediation produces the finding-to-fix-to-merge chain. You still need Vanta, Drata, Secureframe, or equivalent platforms for detection monitoring, access control evidence, infrastructure compliance, and the rest of your SOC 2 control coverage.

- Automated fixes do not cover every finding type. Architectural issues, business logic flaws, and configuration vulnerabilities that depend on deployment context require human judgment. Automated remediation handles well-understood vulnerability patterns (dependency updates, injection fixes, insecure defaults), not findings that require architectural reasoning.

- Auditor expectations vary. Some auditors will want to see the human review step documented alongside automated fixes. The PR-based workflow includes developer review and merge approval, but organizations should confirm with their audit firm that this satisfies their specific evidence expectations.

- Evidence quality depends on scanner coverage. Automated remediation evidence is only as complete as your scanner coverage. Gaps in scanning create gaps in the evidence chain because the automation cannot fix what the scanners do not find.

- First-audit organizations face a cold-start problem. If you are generating evidence for the first time, automated remediation produces prospective evidence from the point of adoption forward. It does not retroactively create evidence for vulnerabilities that were already fixed manually.

Beyond SOC 2: One Evidence Trail, Multiple Frameworks

Multi-framework companies face an average of 17+ audit requests per quarter (Hyperproof). SOC 2 and ISO 27001 share overlapping controls, yet organizations routinely waste time creating independent evidence sets for each framework. As Hyperproof puts it, "security professionals are often stuck recreating the same evidence across frameworks instead of building defenses or responding to incidents."

Sixty-five percent of organizations say automation is the most effective way to cut compliance complexity and cost (Secureframe). If you are already collecting remediation evidence for SOC 2, the same artifacts map directly to your other frameworks:

- ISO 27001 (A.12.6 Technical Vulnerability Management in the 2013 edition; A.8.8 in the 2022 edition)

- PCI DSS (Requirement 6: Develop and Maintain Secure Systems and Software)

- NIST CSF (RS.MI: Mitigation activities)

- FedRAMP (continuous monitoring requirements)

One remediation workflow. One evidence trail. Multiple framework coverage.
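In data terms, reusing one evidence trail means tagging each remediation record with the control it supports in every framework you report against. The sketch below shows the idea with a hypothetical lookup table built from the references above; the labels are shorthand, and you should confirm exact clause numbers against the edition of each framework you are audited on.

```python
from typing import Dict, List

# Hypothetical mapping from framework name to the control this evidence supports.
FRAMEWORK_CONTROLS: Dict[str, str] = {
    "SOC 2": "CC7.1-CC7.4",
    "ISO 27001": "A.12.6 Technical Vulnerability Management",
    "PCI DSS": "Requirement 6",
    "NIST CSF": "RS.MI Mitigation",
    "FedRAMP": "Continuous monitoring",
}


def tag_evidence(record: dict, frameworks: List[str]) -> dict:
    """Return a copy of the record annotated with the controls it supports."""
    unknown = [name for name in frameworks if name not in FRAMEWORK_CONTROLS]
    if unknown:
        raise KeyError(f"no control mapping for: {', '.join(unknown)}")
    tagged = dict(record)  # leave the original record untouched
    tagged["controls"] = {name: FRAMEWORK_CONTROLS[name] for name in frameworks}
    return tagged
```

The remediation record is stored once; only the tags differ per framework, which is what lets the same artifact answer several audit requests.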

What Inaction Costs

Companies with SOC 2 Type II close enterprise deals 35% faster than competitors without certification (SOC 2 Certification Directory). Companies managing risk through ad-hoc processes reported a breach rate of 50% in 2025, nearly double the 27% rate among organizations with integrated, automated approaches (Secureframe, citing industry survey data). Only 18% of SaaS companies have secured either SOC 2 or ISO 27001. The gap is wide, and the market is rewarding those who close it.

See Audit-Ready Evidence in Your Environment

Book a demo to see how automated remediation generates SOC 2 evidence from your actual scanners and repositories. We will show the finding-to-merge chain auditors want to see.
