Java Vulnerability Remediation: From Scanner Alert to Merged Fix

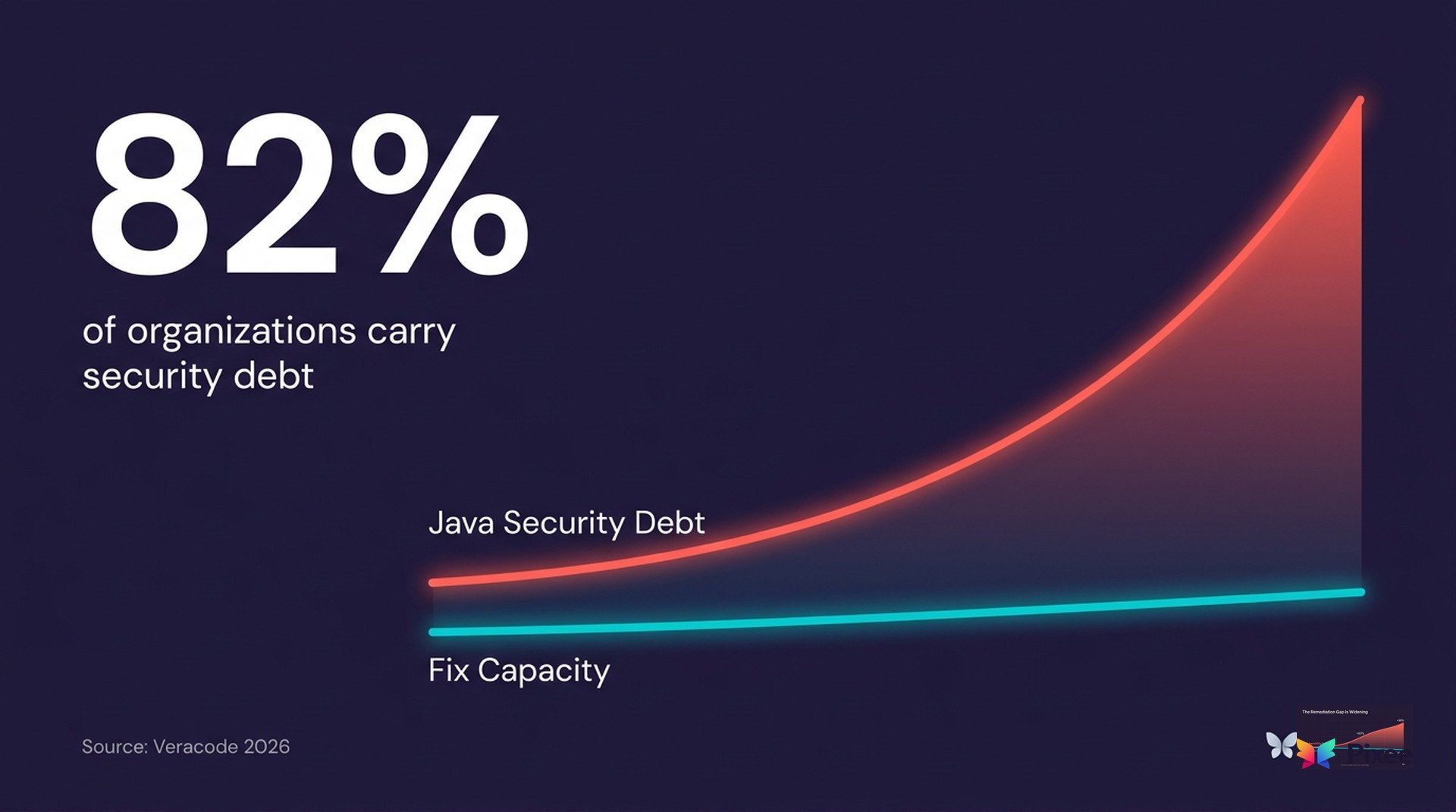

82% of organizations carry security debt they haven't been able to pay down (Veracode State of Software Security 2026).

Java codebases are where that debt compounds fastest. They're the oldest, the largest, and the most likely to contain the same vulnerability pattern repeated across thousands of files in code nobody wants to touch.

The median time to remediate a critical vulnerability is 252 days, while the time-to-exploit for newly disclosed CVEs has collapsed from 63 days to 5 (Mondoo State of Vulnerabilities 2026). For Java specifically, the gap is acute: large, long-lived codebases full of legacy patterns produce the same CWE findings across thousands of files.

The Top Java CWEs and Why They Repeat

Java's most common vulnerability classes are also its most repetitive. The OWASP Top 10 maps to a handful of CWE patterns that appear in nearly every enterprise Java codebase:

- CWE-89: SQL Injection. String concatenation in JDBC queries. The fix is always parameterized queries via PreparedStatement. But every instance needs its own PR because the query structure differs.

- CWE-611: XML External Entity (XXE). Unrestricted XML parsing in DocumentBuilderFactory, SAXParserFactory, or XMLInputFactory. The fix is disabling external entity resolution: three lines of configuration repeated across every XML parser instantiation.

- CWE-918: Server-Side Request Forgery (SSRF). Unvalidated URLs passed to HttpURLConnection or Apache HttpClient. Requires input validation on URL schemes and hostnames.

- CWE-502: Deserialization of Untrusted Data. ObjectInputStream.readObject() without type filtering. Java's serialization model makes this endemic in legacy codebases.

- CWE-330: Insufficient Randomness. java.util.Random instead of java.security.SecureRandom for security-sensitive operations. A single-line replacement that exists in hundreds of files.

- CWE-295: Improper Certificate Validation. TrustManagers that accept all certificates, disabling TLS verification. Common in development code that reaches production.
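To illustrate how small these fixes tend to be, the XXE hardening from the list above might look like the following for DocumentBuilderFactory. This is a minimal sketch, not a definitive configuration: the class and method names (`HardenedXml`, `newFactory`) are hypothetical, and the feature URI is the one recognized by the JDK's built-in Xerces-based parser, so other parser implementations may need different settings.

```java
import javax.xml.parsers.DocumentBuilderFactory;
import javax.xml.parsers.ParserConfigurationException;

// Hypothetical helper: one place to create XML parsers with XXE defenses applied.
final class HardenedXml {
    static DocumentBuilderFactory newFactory() throws ParserConfigurationException {
        DocumentBuilderFactory factory = DocumentBuilderFactory.newInstance();
        // Reject any document that declares a DOCTYPE, which rules out external entities.
        factory.setFeature("http://apache.org/xml/features/disallow-doctype-decl", true);
        // Defense in depth: no XInclude processing, no entity reference expansion.
        factory.setXIncludeAware(false);
        factory.setExpandEntityReferences(false);
        return factory;
    }
}
```

The configuration is identical at every call site, which is why this class of finding lends itself to mechanical repair.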

Each CWE maps to a known, deterministic fix. The issue is scaling those known fixes 10,000 times.
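The SSRF item is a good example of a fix that is known in shape but still needs local input. A minimal sketch of scheme and hostname validation, assuming an HTTPS-only policy and a hypothetical host allowlist (`OutboundUrlPolicy`, `ALLOWED_HOSTS`, and the example.com hosts are all illustrative, not taken from any real codebase):

```java
import java.net.URI;
import java.net.URISyntaxException;
import java.util.Locale;
import java.util.Set;

// Hypothetical policy check to run before handing a URL to an HTTP client.
final class OutboundUrlPolicy {
    // Assumption: a real allowlist would come from configuration.
    private static final Set<String> ALLOWED_HOSTS =
            Set.of("api.example.com", "cdn.example.com");

    static boolean isAllowed(String candidate) {
        if (candidate == null) {
            return false;
        }
        final URI uri;
        try {
            uri = new URI(candidate);
        } catch (URISyntaxException e) {
            return false; // unparseable input is never allowed
        }
        String scheme = uri.getScheme();
        String host = uri.getHost();
        if (scheme == null || host == null) {
            return false; // relative or opaque URIs, or schemes without a host
        }
        return scheme.equalsIgnoreCase("https")
                && ALLOWED_HOSTS.contains(host.toLowerCase(Locale.ROOT));
    }
}
```

A check like this covers only the string-level validation named above. Redirect handling and DNS-level tricks would need separate controls.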

Manual Remediation: Why It Doesn't Scale

A senior Java developer can fix a SQL injection vulnerability in 15-30 minutes: enough time to understand the context, write the parameterized query, test it, and create a PR. At that rate, 180 findings take 45-90 developer-hours, or 1-2 weeks of a developer's time, assuming they do nothing else.

In practice, remediation competes with feature work. Security fixes get deprioritized, backlogs grow, and 81% of organizations ship code they know contains vulnerabilities.

The economics get worse at scale. Organizations with 100K+ vulnerability backlogs can't assign developers to write the same PreparedStatement conversion across thousands of files. With 42% of all committed code now AI-generated (SonarSource State of Code 2026), the problem is going to get worse: AI coding assistants produce Java code with 15-18% more security vulnerabilities than human-written code (Opsera 2026). More code, more vulnerabilities, same team.

Deterministic Codemods: The Automation Layer for Java

Pixee's approach to Java remediation starts with deterministic codemods: rule-based AST transformations that apply known security patterns without LLM involvement. The codemodder-java engine ships 51 core codemods covering the most common Java CWEs.

Each codemod operates on the Java AST (Abstract Syntax Tree), not on raw text. This means fixes are structurally correct. They understand method signatures, import statements, and type hierarchies. A SQL injection codemod doesn't just regex-replace string concatenation; it identifies the JDBC call, constructs the parameterized equivalent, and adjusts the surrounding code to pass parameters correctly.

What this looks like in practice:

Before (CWE-89):

    String query = "SELECT * FROM users WHERE id = " + userId;
    Statement stmt = connection.createStatement();
    ResultSet rs = stmt.executeQuery(query);

After:

    String query = "SELECT * FROM users WHERE id = ?";
    PreparedStatement stmt = connection.prepareStatement(query);
    stmt.setString(1, userId);
    ResultSet rs = stmt.executeQuery();

Codemods handle the structural transformation: replacing Statement with PreparedStatement, converting concatenation to parameter binding, and adjusting the executeQuery call. Every instance of this pattern gets the same fix.
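To make the difference between AST matching and text matching concrete, here is a small sketch that parses Java source and reports `executeQuery` calls whose argument is a string concatenation. It uses the JDK's own Compiler Tree API as a stand-in and is not how codemodder-java is implemented. The class `ConcatQueryFinder` is hypothetical, and the check is deliberately simplified: it only sees concatenation written inline in the call, so a query built in a separate variable (as in the Before snippet above) would need data-flow tracking that this sketch omits.

```java
import com.sun.source.tree.CompilationUnitTree;
import com.sun.source.tree.MemberSelectTree;
import com.sun.source.tree.MethodInvocationTree;
import com.sun.source.tree.Tree;
import com.sun.source.util.JavacTask;
import com.sun.source.util.TreeScanner;
import java.io.IOException;
import java.net.URI;
import java.util.ArrayList;
import java.util.List;
import javax.tools.JavaCompiler;
import javax.tools.JavaFileObject;
import javax.tools.SimpleJavaFileObject;
import javax.tools.ToolProvider;

// Hypothetical detector: finds executeQuery(...) calls with an inline "+" argument.
final class ConcatQueryFinder {
    static List<String> findConcatenatedQueries(String source) throws IOException {
        JavaCompiler compiler = ToolProvider.getSystemJavaCompiler();
        if (compiler == null) {
            throw new IllegalStateException("A JDK (not just a JRE) is required");
        }
        // Wrap the source string so javac can parse it without touching disk.
        JavaFileObject file = new SimpleJavaFileObject(
                URI.create("string:///Sample.java"), JavaFileObject.Kind.SOURCE) {
            @Override
            public CharSequence getCharContent(boolean ignoreEncodingErrors) {
                return source;
            }
        };
        JavacTask task = (JavacTask) compiler.getTask(
                null, null, null, null, null, List.of(file));

        List<String> hits = new ArrayList<>();
        for (CompilationUnitTree unit : task.parse()) { // parse only, no type resolution
            new TreeScanner<Void, Void>() {
                @Override
                public Void visitMethodInvocation(MethodInvocationTree call, Void unused) {
                    if (call.getMethodSelect() instanceof MemberSelectTree) {
                        MemberSelectTree select = (MemberSelectTree) call.getMethodSelect();
                        boolean isExecuteQuery =
                                select.getIdentifier().contentEquals("executeQuery");
                        boolean hasConcatArgument = call.getArguments().stream()
                                .anyMatch(arg -> arg.getKind() == Tree.Kind.PLUS);
                        if (isExecuteQuery && hasConcatArgument) {
                            hits.add(call.toString());
                        }
                    }
                    return super.visitMethodInvocation(call, unused);
                }
            }.scan(unit, null);
        }
        return hits;
    }
}
```

Because the scanner visits method invocation nodes, a string literal that merely contains the text `executeQuery(` next to a `+` is not reported, which is the kind of false match a regex over raw text would be prone to.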

Beyond Deterministic: When AI Steps In

Not every Java vulnerability maps to a deterministic pattern. Complex business logic vulnerabilities, custom authentication flows, or framework-specific issues require contextual understanding that rule-based codemods can't provide.

For these cases, Pixee's MagicMods analyze the surrounding code context (call graphs, data flow, framework conventions) to generate fixes that match your codebase style. Every AI-generated fix passes through a three-layer validation framework: constrained generation, automated evaluation (safety, effectiveness, cleanliness scoring), and CI/CD test verification.

This creates a hybrid architecture. Deterministic codemods handle the high-volume, predictable cases (80%+ of a typical Java backlog), while AI handles the long tail. Both produce PRs that developers review through their normal workflow.

Why Merge Rate Matters More Than Fix Count

Any tool can generate thousands of patches. The question is whether developers actually merge them.

Industry-wide, automated security PRs merge at rates below 20%. Developers reject them because the fixes don't match their code conventions, break tests, or introduce more complexity than they resolve.

Pixee's Java fixes merge at 76% across enterprise codebases. The gap comes from three factors:

How This Compares to Vendor-Native Autofix

If you run Checkmarx, Snyk, or Semgrep, you already have some form of autofix. Here's what differs:

Vendor autofix is locked to one scanner. Checkmarx autofix only works with Checkmarx findings. Snyk Code autofix only works with Snyk findings. If you run both (most enterprises do), you manage two separate fix pipelines with different quality levels and different review workflows.

Vendor autofix skips triage. Snyk and Checkmarx generate fixes for every finding, including false positives. You still need a human to decide which fixes to merge. Scanner-agnostic triage that eliminates 70-95% of false positives before fix generation means developers only review fixes for real issues.

Generic AI fixes lack an evaluation rubric. Copilot and general-purpose LLMs generate fixes from scratch every time with no quality gate. Pixee's Fix Evaluation Agent scores every generated fix on safety, effectiveness, and cleanliness (0.8 threshold) before it becomes a PR. Fixes that fail evaluation get regenerated, not shipped.

Open-source fix rules are auditable. Both codemodder-java and codemodder-python are open-source. You can read every transformation rule before trusting it. Snyk Code and Checkmarx autofix are closed-source black boxes.
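The evaluation rubric mentioned above (safety, effectiveness, and cleanliness scores against a 0.8 threshold) can be pictured as a simple gate. The sketch below is illustrative only: `FixEvaluation` is a hypothetical class, and the article does not say whether the threshold applies to each score or to an aggregate, so requiring every score to clear it independently is an assumption.

```java
// Hypothetical model of a fix-quality gate; not Pixee's actual implementation.
final class FixEvaluation {
    // Threshold value taken from the article; how it is applied is assumed.
    static final double THRESHOLD = 0.8;

    final double safety;
    final double effectiveness;
    final double cleanliness;

    FixEvaluation(double safety, double effectiveness, double cleanliness) {
        this.safety = safety;
        this.effectiveness = effectiveness;
        this.cleanliness = cleanliness;
    }

    // Assumption: a fix becomes a PR only if every dimension meets the threshold.
    boolean passes() {
        return safety >= THRESHOLD
                && effectiveness >= THRESHOLD
                && cleanliness >= THRESHOLD;
    }
}
```

Under this reading, a fix scoring 0.95 on safety and effectiveness but 0.79 on cleanliness would be sent back for regeneration rather than opened as a PR.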

Where Automated Java Remediation Doesn't Work

Automated fixes have real limitations:

For these cases, automated triage reduces the noise (up to 95% false positive reduction) so your team spends its limited remediation bandwidth on the issues that genuinely require human judgment.

Getting Started With Java Vulnerability Remediation

If you're sitting on a Java vulnerability backlog, the most productive first step isn't writing fixes. It's triaging what's real. Most enterprise Java codebases have false positive rates between 71% and 88%. Fixing false positives wastes the same developer time as fixing real vulnerabilities.

Start with triage automation to identify which of your 400 findings are actually exploitable. Then apply automated remediation to the confirmed issues. The combination typically reduces a security backlog by 91%: the false positives get eliminated, and the real issues get fixed.
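As a rough worked example of what triage does to that 400-finding backlog, the sketch below applies the 71% and 88% false positive rates quoted above. The class name `TriageEstimate` is hypothetical, and the calculation assumes the quoted rates apply uniformly to the whole backlog.

```java
// Hypothetical back-of-the-envelope estimate of findings that survive triage.
final class TriageEstimate {
    // Uses whole-number percentages to keep the arithmetic exact.
    static int realFindings(int totalFindings, int falsePositivePercent) {
        return totalFindings * (100 - falsePositivePercent) / 100;
    }

    public static void main(String[] args) {
        int total = 400;
        System.out.println(realFindings(total, 71)); // prints 116
        System.out.println(realFindings(total, 88)); // prints 48
    }
}
```

In other words, somewhere between 48 and 116 of the 400 findings would be worth a fix at all under these assumptions.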

The urgency is real: only 18% of vulnerabilities scored "critical" remain critical after runtime context analysis (Datadog State of DevSecOps 2026). Your team is spending remediation bandwidth on findings that don't matter while the ones that do sit in a backlog that's growing faster than you can burn it down.

For teams evaluating automated Java remediation, the key questions are:
