What Looking at 14,000 Security Reviews a Year Taught Me About the Future of AppSec

Adapted from my talk "From Guardrails to Autopilot" at PlanetCyberSec, February 2026.

When we rolled out AI-powered developer tools at AWS in 2023, our builders got dramatically more productive. More features shipped, more code committed, more infrastructure deployed. Great news for the business. Terrible news for the Application Security team.

At the time, I was leading the "platform" application security (AppSec) team, responsible for a subset of services within the wider AWS Security organization. Across AWS, AppSec was processing 14,000 security reviews a year.

Then developer velocity jumped.

Not gradually. Not predictably. Suddenly.

And we realized something uncomfortable:

We weren't protecting the engineering organization anymore.

We were pacing it.

When Security Becomes the Clock

On paper, our structure looked healthy: directors, managers, engineers, and clearly owned services.

In practice, the entire engineering organization depended on a set of humans reading and analyzing code at exactly the speed humans can read and analyze code.

Every change that crossed a security boundary had to wait for a specific person who understood that service. Over time, each engineer became the expert for their slice of infrastructure, which meant progress on that service moved at the speed of one person's calendar.

Builders and developers couldn't plan around security reviews because reviews weren't schedulable work. They were interrupts competing with other interrupts. A feature might ship tomorrow or next week, depending on whose queue it landed in.

And the more senior an AppSec engineer became, the worse the system got. Experience didn't remove reviews; it brought more of them. Career growth meant expanding breadth, not depth, which in practice meant becoming a larger bottleneck.

This had always been manageable because developer output grew linearly.

Then the AI-powered Next-Gen Developer Experience (NGDE) tools arrived.

We were preparing internal launches ahead of re:Invent 2023, and our builder teams began dogfooding the tooling. Productivity gains were immediate and obvious, which meant review load scaled instantly.

Nothing broke. We just stopped keeping up.

That's when it became clear:

- Traditional application security wasn't failing because we lacked people.

- It was failing because the model only works when humans are the fastest component in the system. And that stopped being true.

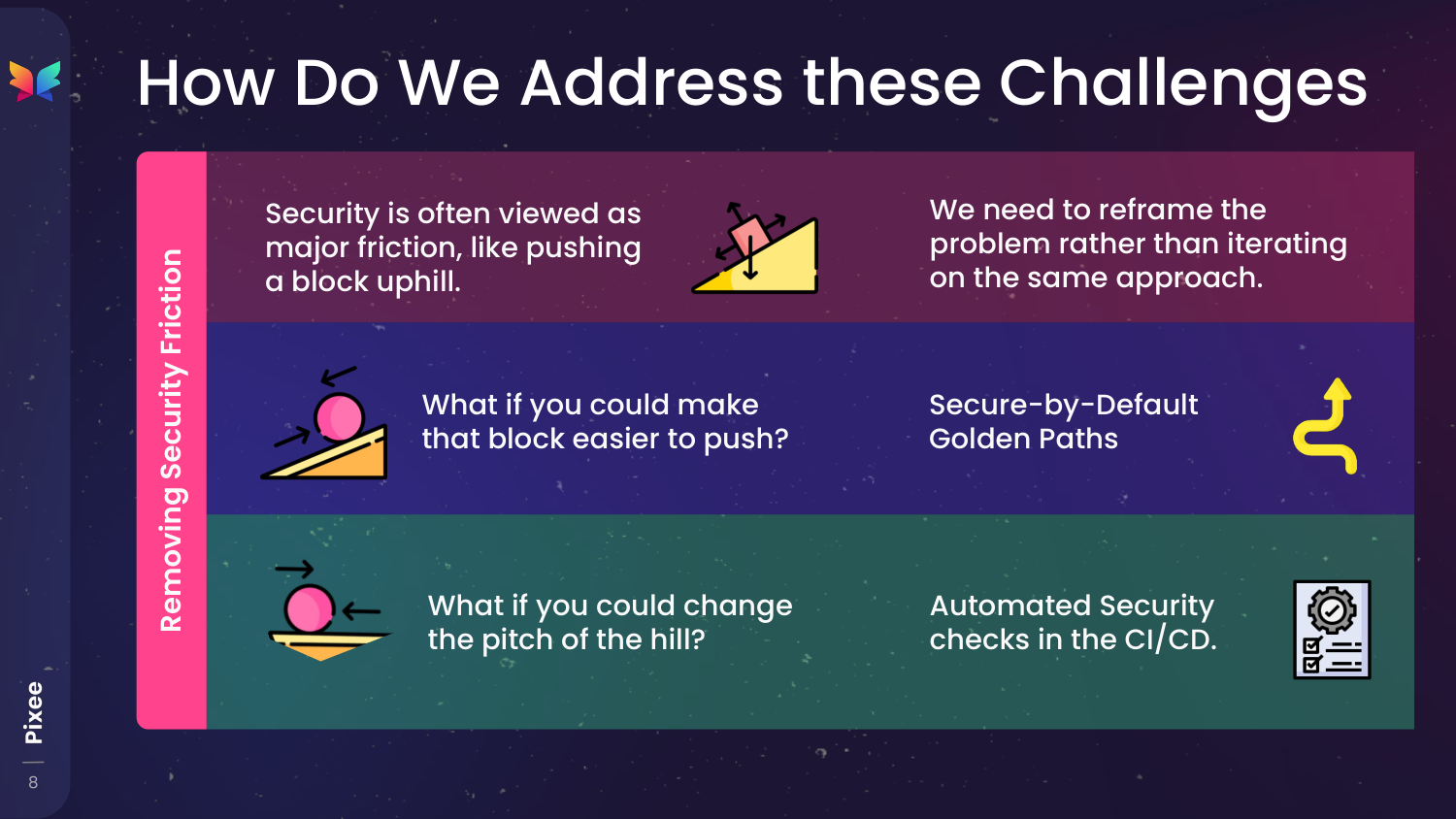

Reframing the Problem

The mistake we were making was subtle. We treated security reviews as work that just needed to happen faster. Like someone pushing a block uphill, we assumed the solution was to push harder. So we tried the obvious fixes: more reviewers, tighter processes, better triage. None of it mattered. The queue always came back, because the length of the queue wasn't the problem; its existence was.

We were asking humans to manually validate a system whose output was now machine-scale. At that point, the goal stopped being "review more efficiently" and became "need fewer reviews".

You can think of our AppSec maturity as stages of removing gravity from the process:

- First, make the safe path the easiest path. Reduce how often builders can even create risky changes by providing secure-by-default golden paths.

- Next, make most decisions automatic. Deterministic checks in CI/CD turn reviews into confirmations instead of investigations.

- Finally, remove entire categories of review. GenAI systems understand context and intent and continuously enforce the security bar.

Here's what we learned at each stage.

Golden Paths: Preventing the Problem From Existing

The first realization was that most security findings weren't caused by reckless developers. They came from reasonable decisions made on top of unsafe defaults.

Fix the defaults and entire categories of issues disappear before a reviewer ever sees them.

At AWS, 93% of new applications were built using the Cloud Development Kit (CDK). That gave us leverage: instead of reviewing infrastructure after it was written, we could influence how it was written.

So we changed the platform.

Our team introduced blueprint property injection into CDK: a mechanism for enforcing configuration at the framework layer. When a developer created an S3 bucket or an API Gateway, secure settings were applied automatically. No extra code. No ticket. No review for that pattern.
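The real mechanism lives inside CDK; the sketch below only illustrates the idea in plain Python. The `ENFORCED_PROPS` table, its keys and values, and the `create_resource` stand-in are hypothetical, not the CDK API or AWS's actual blueprint settings. It assumes enforced settings should override developer input; a gentler variant would only fill in keys the developer left unset.

```python
from typing import Any, Dict

# Hypothetical enforced settings per resource type (illustrative assumptions only).
ENFORCED_PROPS: Dict[str, Dict[str, Any]] = {
    "s3.Bucket": {
        "block_public_access": "BLOCK_ALL",
        "enforce_ssl": True,
        "encryption": "S3_MANAGED",
    },
    "apigateway.RestApi": {
        "default_authorization": "IAM",
        "access_logging": True,
    },
}


def inject_secure_props(resource_type: str, props: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of props with the enforced settings layered on top.

    Enforced keys override whatever the developer passed; resource types
    without an entry pass through unchanged.
    """
    return {**props, **ENFORCED_PROPS.get(resource_type, {})}


def create_resource(resource_type: str, **props: Any) -> Dict[str, Any]:
    """Hypothetical stand-in for a construct constructor: every resource goes
    through the injector, so developers get secure settings with no extra code."""
    return {"type": resource_type, "props": inject_secure_props(resource_type, props)}


bucket = create_resource("s3.Bucket", bucket_name="reports", enforce_ssl=False)
print(bucket["props"]["enforce_ssl"])  # True: the enforced value wins
```

Because the injection happens where resources are constructed, the secure setting is the path of least resistance rather than something a reviewer has to ask for.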

We created golden path environments and pushed adoption across the organization. The pitch was simple:

- Build here and security is automatic.

- Build elsewhere and you enter the review queue.

Entire classes of misconfigurations stopped appearing. Developers chose the golden path because it was faster, and the review queue shrank without adding a single reviewer.

But this only solved configuration risk.

Application logic, authentication flows, data handling, and custom integrations still required human judgment.

So we needed another layer.

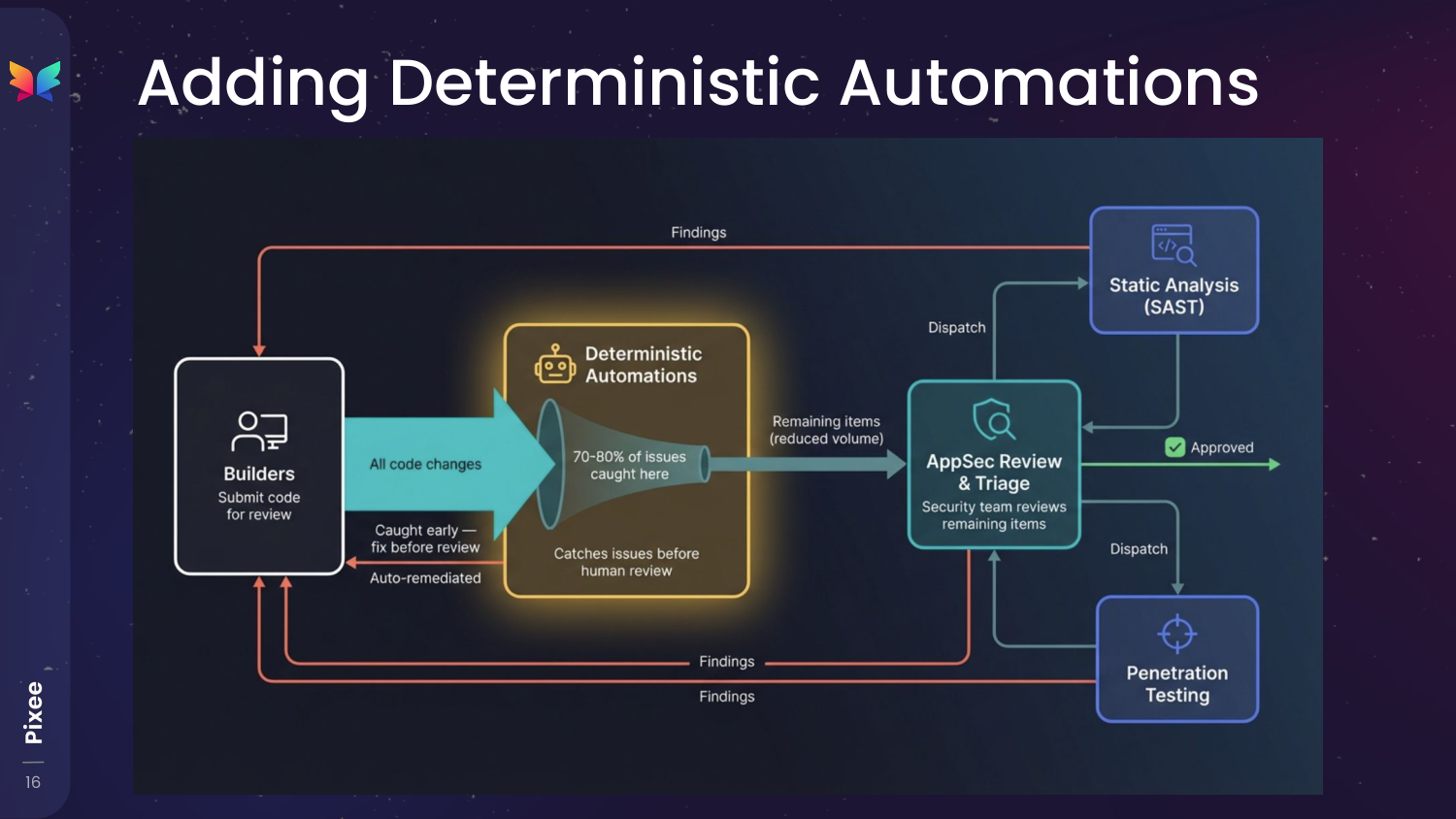

Deterministic Automations: Remove Humans From the Obvious Detections

Golden paths eliminated entire categories of problems, but a large portion of reviews still weren't actually judgment.

They were recognition.

The same patterns appeared repeatedly, yet they were distributed across many reviewers. Individually, each engineer saw noise. Collectively, there was signal, but the organization wasn't structured to see it.

So we changed the intake model.

We routed a portion of all reviews to a single team whose only job was to look for commonality across services. Instead of closing tickets quickly, they slowed down and asked a different question: have we answered this before?
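One way to picture that team's first pass, assuming each reviewed finding can be reduced to a (category, context) signature plus the reviewer's decision, is to group past reviews and look for signatures that recur across several services with a unanimous outcome. The `ReviewedFinding` shape, the category names, and the three-service threshold are illustrative assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, Set, Tuple

Signature = Tuple[str, str]  # (finding category, surrounding context)


@dataclass(frozen=True)
class ReviewedFinding:
    service: str
    category: str   # hypothetical taxonomy, e.g. "public-read-bucket"
    context: str    # e.g. "account-level-block-public-access"
    decision: str   # what the human reviewer concluded


def recurring_patterns(findings: Iterable[ReviewedFinding],
                       min_services: int = 3) -> Dict[Signature, str]:
    """Map each signature seen in at least `min_services` distinct services,
    where every reviewer reached the same decision, to that decision."""
    services: Dict[Signature, Set[str]] = defaultdict(set)
    decisions: Dict[Signature, Set[str]] = defaultdict(set)
    for f in findings:
        key = (f.category, f.context)
        services[key].add(f.service)
        decisions[key].add(f.decision)
    return {
        key: next(iter(decisions[key]))
        for key in services
        if len(services[key]) >= min_services and len(decisions[key]) == 1
    }
```

Signatures that recur but carry conflicting decisions are excluded here; in practice those are exactly the edge cases worth documenting before any rule is written.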

Once patterns emerged, we documented every edge case and reduced the decision to a rule: given context X and property Y, the answer is always A.

That rule became a binary decision tree: a precise description of how a human reviewer verified the finding.
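A tree like that can be sketched as data: each internal node asks one yes/no question about collected facts, each leaf is a decision, and the evaluated path doubles as an audit trail of how the answer was reached. The rule for a public-read bucket finding, its fact names, and its outcomes are hypothetical, not AWS's actual rule.

```python
from dataclasses import dataclass
from typing import Any, Callable, List, Mapping, Tuple, Union


@dataclass(frozen=True)
class Leaf:
    decision: str


@dataclass(frozen=True)
class Node:
    question: str
    test: Callable[[Mapping[str, Any]], bool]
    if_yes: "Tree"
    if_no: "Tree"


Tree = Union[Node, Leaf]


def evaluate(tree: Tree, facts: Mapping[str, Any]) -> Tuple[str, List[str]]:
    """Walk the tree; return the decision plus each question answered on the way."""
    path: List[str] = []
    while isinstance(tree, Node):
        answer = bool(tree.test(facts))
        path.append(f"{tree.question} -> {'yes' if answer else 'no'}")
        tree = tree.if_yes if answer else tree.if_no
    return tree.decision, path


# Hypothetical rule for a "bucket policy allows public read" finding.
PUBLIC_READ_RULE = Node(
    question="Is S3 Block Public Access enforced at the account level?",
    test=lambda facts: facts.get("account_block_public_access", False),
    if_yes=Leaf("resolve: not effectively public"),
    if_no=Node(
        question="Is the bucket on the approved public-content list?",
        test=lambda facts: facts.get("approved_public_content", False),
        if_yes=Leaf("resolve: approved exception"),
        if_no=Leaf("escalate: human review"),
    ),
)

decision, path = evaluate(PUBLIC_READ_RULE, {"approved_public_content": True})
print(decision)  # resolve: approved exception
```

The fall-through leaf escalates, so any case the rule was not written for still reaches a human.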

Automation came last.

We first ran the logic in the browser, then as CLI tooling. Only after it matched human decisions across hundreds of reviews did we promote it into event-driven automation that continuously evaluated changes before AppSec ever saw them.

This caution mattered. A single incorrect automated decision damages trust faster than a slow manual one.
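That promotion gate can be expressed as a check over a shadow run: the automation's decision is recorded next to the human's decision for the same review, and the rule is promoted only after enough agreement. The 200-review minimum, the 98% agreement threshold, and the two-value `resolve`/`escalate` vocabulary are assumptions for illustration. The one hard condition in this sketch is zero cases where automation resolved something a human escalated, since those are the errors that cost trust.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple


@dataclass(frozen=True)
class ShadowResult:
    total: int
    agreements: int
    unsafe_misses: int  # automation said "resolve" where a human said "escalate"

    @property
    def agreement_rate(self) -> float:
        return self.agreements / self.total if self.total else 0.0


def score_shadow_run(pairs: Iterable[Tuple[str, str]]) -> ShadowResult:
    """Score (automated_decision, human_decision) pairs from the same reviews."""
    total = agreements = unsafe_misses = 0
    for automated, human in pairs:
        total += 1
        if automated == human:
            agreements += 1
        elif automated == "resolve" and human == "escalate":
            unsafe_misses += 1
    return ShadowResult(total, agreements, unsafe_misses)


def ready_to_promote(result: ShadowResult, min_reviews: int = 200,
                     min_agreement: float = 0.98) -> bool:
    """Promote only with enough history, high agreement, and no unsafe misses."""
    return (result.total >= min_reviews
            and result.unsafe_misses == 0
            and result.agreement_rate >= min_agreement)


run = score_shadow_run([("resolve", "resolve")] * 250 + [("escalate", "resolve")] * 3)
print(ready_to_promote(run))  # True: the three disagreements were conservative
```

Disagreements in the conservative direction (automation escalates, a human resolves) cost reviewer time but not trust, so this sketch counts them only against the agreement rate.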

We originally targeted a 25% reduction in review time by year's end. By October, the six-week trailing average of that reduction had already passed 30%, materially reducing how much time AppSec engineers spent in review queues.

The impact extended beyond AppSec. The same checks eliminated downstream pentest effort: about 1,480 pentester days per year. Roughly four engineers' worth of work shifted from repetitive triage to novel problems.

None of this involved AI.

Binary decision trees and simple tooling removed more work than any model we would later deploy. The lesson was counterintuitive: before teaching machines to reason about security, first centralize and encode the answers humans already agree on.

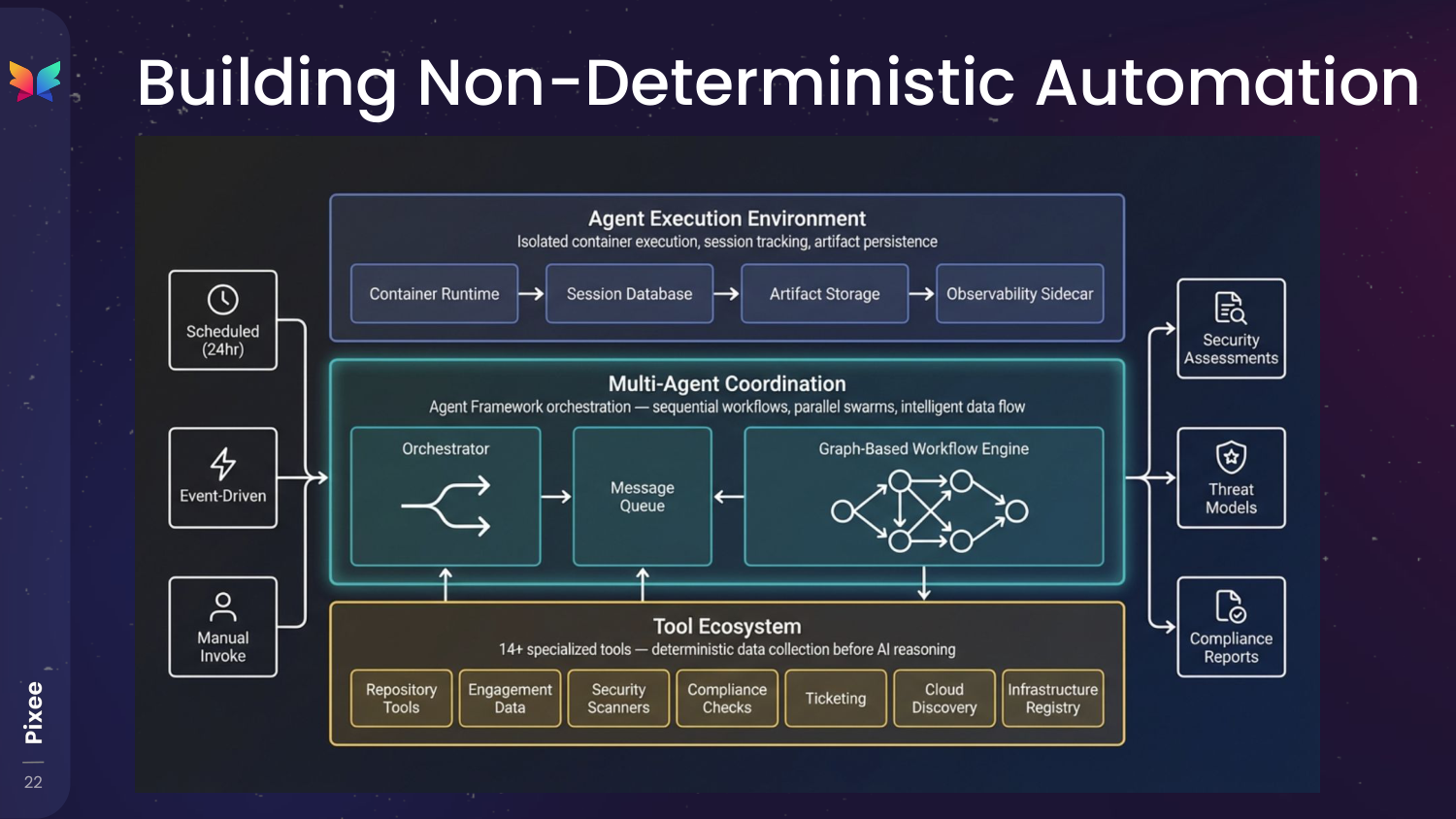

The Agentic Frontier: When Reviews Become Reasoning

After building golden paths and deterministic automation, the remaining work looked different.

Not repetitive. Not pattern-based. Threat models. Architecture tradeoffs. Novel vulnerability chains. Problems where the answer depended on intent and context, not a checklist.

Traditional reviews handle these by restarting an investigation every time. An engineer gathers data, builds a mental model, makes a decision, then the context disappears. So we stopped thinking about AI as a reviewer and started treating it as a persistent investigator.

This agentic security engineer's job wasn't to decide immediately. Its job was to assemble the full security picture before a human ever engaged.

To do that we built a layered investigation pipeline:

- Deterministic collectors gathered facts first - repository state, infrastructure configuration, prior findings, compliance signals, and service metadata.

- A workflow graph then decomposed the analysis into steps. Specialized agents performed tasks in sequence and in parallel, passing structured outputs to one another instead of raw text.

- Isolated execution sessions preserved artifacts and reasoning so analysis could pause and resume without losing context.
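Those three layers can be sketched with Python's standard library: `graphlib.TopologicalSorter` orders the workflow graph, independent steps run in parallel on a thread pool, each step receives only its dependencies' structured (dict) outputs, and a JSON-serializable session lets a run pause and resume without redoing finished work. The step names and values are hypothetical, and the "agent" steps are deterministic stubs standing in for model-backed analysis.

```python
import json
from concurrent.futures import ThreadPoolExecutor
from graphlib import TopologicalSorter
from typing import Any, Callable, Dict, Mapping, Optional, Set

Output = Dict[str, Any]
Step = Callable[[Mapping[str, Output]], Output]


class Session:
    """Holds each finished step's structured output; serializable for pause/resume."""

    def __init__(self, saved: str = "{}") -> None:
        self.outputs: Dict[str, Output] = json.loads(saved)

    def save(self) -> str:
        return json.dumps(self.outputs)


def run_pipeline(steps: Mapping[str, Step], deps: Mapping[str, Set[str]],
                 session: Session, stop_after: Optional[int] = None) -> Session:
    """Run steps in dependency order, in parallel where possible, skipping steps
    the session already holds. `stop_after` simulates pausing after N new steps."""
    sorter = TopologicalSorter({name: deps.get(name, set()) for name in steps})
    sorter.prepare()
    executed = 0
    with ThreadPoolExecutor() as pool:
        while sorter.is_active():
            ready = sorter.get_ready()
            todo = [name for name in ready if name not in session.outputs]
            futures = {
                name: pool.submit(steps[name], {d: session.outputs[d] for d in deps.get(name, set())})
                for name in todo
            }
            for name, future in futures.items():
                session.outputs[name] = future.result()
            executed += len(todo)
            sorter.done(*ready)
            if stop_after is not None and executed >= stop_after:
                break
    return session


# Deterministic collectors: facts only, no interpretation (values are made up).
def collect_repo(_: Mapping[str, Output]) -> Output:
    return {"public_endpoints": 2}


def collect_infra(_: Mapping[str, Output]) -> Output:
    return {"waf_enabled": False}


# Stand-ins for specialized agents that consume structured inputs.
def assess_exposure(inputs: Mapping[str, Output]) -> Output:
    repo, infra = inputs["collect_repo"], inputs["collect_infra"]
    return {"exposed": repo["public_endpoints"] > 0 and not infra["waf_enabled"]}


def summarize(inputs: Mapping[str, Output]) -> Output:
    return {"needs_human": inputs["assess_exposure"]["exposed"]}


STEPS: Dict[str, Step] = {
    "collect_repo": collect_repo,
    "collect_infra": collect_infra,
    "assess_exposure": assess_exposure,
    "summarize": summarize,
}
DEPS: Dict[str, Set[str]] = {
    "assess_exposure": {"collect_repo", "collect_infra"},
    "summarize": {"assess_exposure"},
}

paused = run_pipeline(STEPS, DEPS, Session(), stop_after=2)  # collectors finish, then pause
resumed = run_pipeline(STEPS, DEPS, Session(paused.save()))  # picks up at assess_exposure
print(resumed.outputs["summarize"])  # {'needs_human': True}
```

Passing dicts rather than free text between steps is what makes the saved session meaningful: a resumed run can hand the same structured facts to the next step without re-deriving them.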

The important part wasn't the agents. It was continuity. Instead of a reviewer reconstructing the security picture from scratch every time they engaged, the agentic system assembled it before a reviewer needed to be involved.

With stable evidence, some decisions stopped being judgment calls. The agentic security engineer consumed the same inputs a senior engineer would gather before forming an opinion, and when those inputs were complete, the answer stopped depending on who reviewed it.

At this point, correctness wasn't a human scaling problem anymore; it was an input completeness problem. We weren't automating opinions. We were automating prerequisite understanding.
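Treating correctness as an input-completeness problem suggests a gate in front of any decision: declare the evidence a review type needs, then either decide or name exactly what is still missing. The review type and evidence names below are hypothetical. In this sketch `False` and empty values count as evidence; only an absent or `None` value does not.

```python
from typing import Any, Dict, FrozenSet, Mapping

REQUIRED_EVIDENCE: Dict[str, FrozenSet[str]] = {
    # Hypothetical review type and the facts a senior engineer would gather first.
    "data_store_review": frozenset({
        "data_classification", "encryption_at_rest", "access_policy", "prior_findings",
    }),
}


def missing_evidence(review_type: str, evidence: Mapping[str, Any]) -> FrozenSet[str]:
    """Required facts that are absent or None (False and empty values count as present)."""
    return frozenset(k for k in REQUIRED_EVIDENCE[review_type] if evidence.get(k) is None)


def next_action(review_type: str, evidence: Mapping[str, Any]) -> str:
    """Decide only when inputs are complete; otherwise say what to collect."""
    missing = missing_evidence(review_type, evidence)
    if missing:
        return "collect: " + ", ".join(sorted(missing))
    return "decide"


print(next_action("data_store_review",
                  {"data_classification": "confidential", "encryption_at_rest": True}))
# collect: access_policy, prior_findings
```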

The Compounding Effect

Golden paths eliminated entire categories of configuration issues. Deterministic automations resolved known answers before human review. Agentic systems handled the reasoning work that used to require senior engineers.

Each layer removed a different kind of effort.

Golden paths reduced how much risk could enter the system. Deterministic automation handled repeatable decisions. Agentic investigation handled the problems that actually required understanding.

The result wasn't fewer security engineers. It was security engineers finally spending their time on novel attack paths, architectural tradeoffs, and strategic program direction, instead of reconstructing the same context over and over again.

No single layer achieves this alone. The leverage comes from stacking them.
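The stacking can be pictured as an ordered chain: each change is offered to the cheapest layer first, only what a layer cannot settle falls through to the next, and human review is the default at the end. The boolean flags on each change are hypothetical stand-ins for the real checks each layer would run.

```python
from collections import Counter
from typing import Any, Callable, Iterable, List, Mapping, Optional, Tuple

Change = Mapping[str, Any]
Layer = Tuple[str, Callable[[Change], Optional[str]]]


def golden_path(change: Change) -> Optional[str]:
    return "secure by default" if change.get("on_golden_path") else None


def known_answers(change: Change) -> Optional[str]:
    return "resolved by rule" if change.get("matches_rule") else None


def agentic_investigation(change: Change) -> Optional[str]:
    return "decided from complete evidence" if change.get("evidence_complete") else None


# Cheapest layer first, so each later layer only sees what earlier ones left.
LAYERS: List[Layer] = [
    ("golden_path", golden_path),
    ("deterministic", known_answers),
    ("agentic", agentic_investigation),
]


def route(change: Change, layers: List[Layer] = LAYERS) -> Tuple[str, str]:
    """Return (layer, outcome) from the first layer that settles the change."""
    for name, handle in layers:
        outcome = handle(change)
        if outcome is not None:
            return name, outcome
    return "human", "queued for a security engineer"


def funnel(changes: Iterable[Change]) -> Counter:
    """Count where each change was settled; the 'human' bucket is what's left over."""
    return Counter(route(c)[0] for c in changes)


print(funnel([{"on_golden_path": True}, {"matches_rule": True}, {}]))
```

Removing any one layer does not break the chain; its share of changes simply lands on the next, more expensive layer.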

A Hard-Learned Practical Lesson

We built this internally because we could.

It took years, a dedicated team, and an environment where investing in long-term security infrastructure made economic sense. At a very large scale, reducing marginal review cost justifies building systems that smaller organizations would never consider funding.

If I were starting the same journey at a typical company, I wouldn't rebuild most of it.

Not because the approach is wrong, but because it works only after you solve dozens of uninteresting engineering problems: integrations, context collection, remediation validation, maintenance as scanners evolve, and continuous rule updates. The security insight is the small part. The surrounding platform is the expensive part.

The real decision isn't "automation or not." It's where you want your engineers spending their time: inventing security strategy, or maintaining security infrastructure.

For most organizations, buying the foundation and customizing the triage and fix layer is the rational path. Build only the pieces that differentiate your business. Otherwise you risk recreating a second product organization inside your security team.

Where You Probably Are

Most security programs struggling with review volume aren't missing intelligence. They're missing stability in one of the three foundational layers (0 through 2) beneath the reasoning work of Layer 3.

- (Layer 0) Prevention Problem: Engineers repeatedly introduce the same categories of issues. Reviews are dominated by education and correction.

- (Layer 1) Known Answers Problem: Reviewers keep answering questions that already have agreed-upon answers. The team isn't doing analysis; it's doing recall.

- (Layer 2) Context Reconstruction Problem: Senior engineers spend most of their time gathering information before they can think. The expertise bottleneck is not judgment; it's investigation.

- (Layer 3) Reasoning Problem: Only at this layer does the work actually require human security intuition, such as threat modeling, architectural tradeoffs, and novel attack paths.

Organizations often attempt to solve a Layer 0-2 problem with a Layer 3 technology. That's why many automation and AI efforts feel disappointing: they're pointed at the wrong constraint. Progress happens when you stabilize the lowest failing layer first, not because it's glamorous, but because every higher layer depends on it.

Adam Schaal is a Distinguished Engineer at Pixee AI. Previously, he led SHINE (Security Hub Innovation and Experience) at AWS, where he oversaw application security for Amazon's developer platform services. He is a co-founder of Kernelcon and serves on the LocoMocoSec organizing committee. Find him at @clevernyyyy or linkedin.com/in/adamschaal.
