Your Critical Findings Quadrupled. Your Security Team Didn't.

AI coding tools have already changed the math on application security. Not in the future. Not as a theoretical risk. Today, in the data your scanners are already producing.

OX Security analyzed 216 million security findings and reported a 4x increase in critical risk year-over-year. Their attribution is direct. AI coding tools accelerate development at a pace security teams were never staffed to review. The tools that generate findings and the tools that generate vulnerable code are both scaling. The people who fix things are not.

That gap is the story of application security in 2026.

The Numbers Behind the 4x

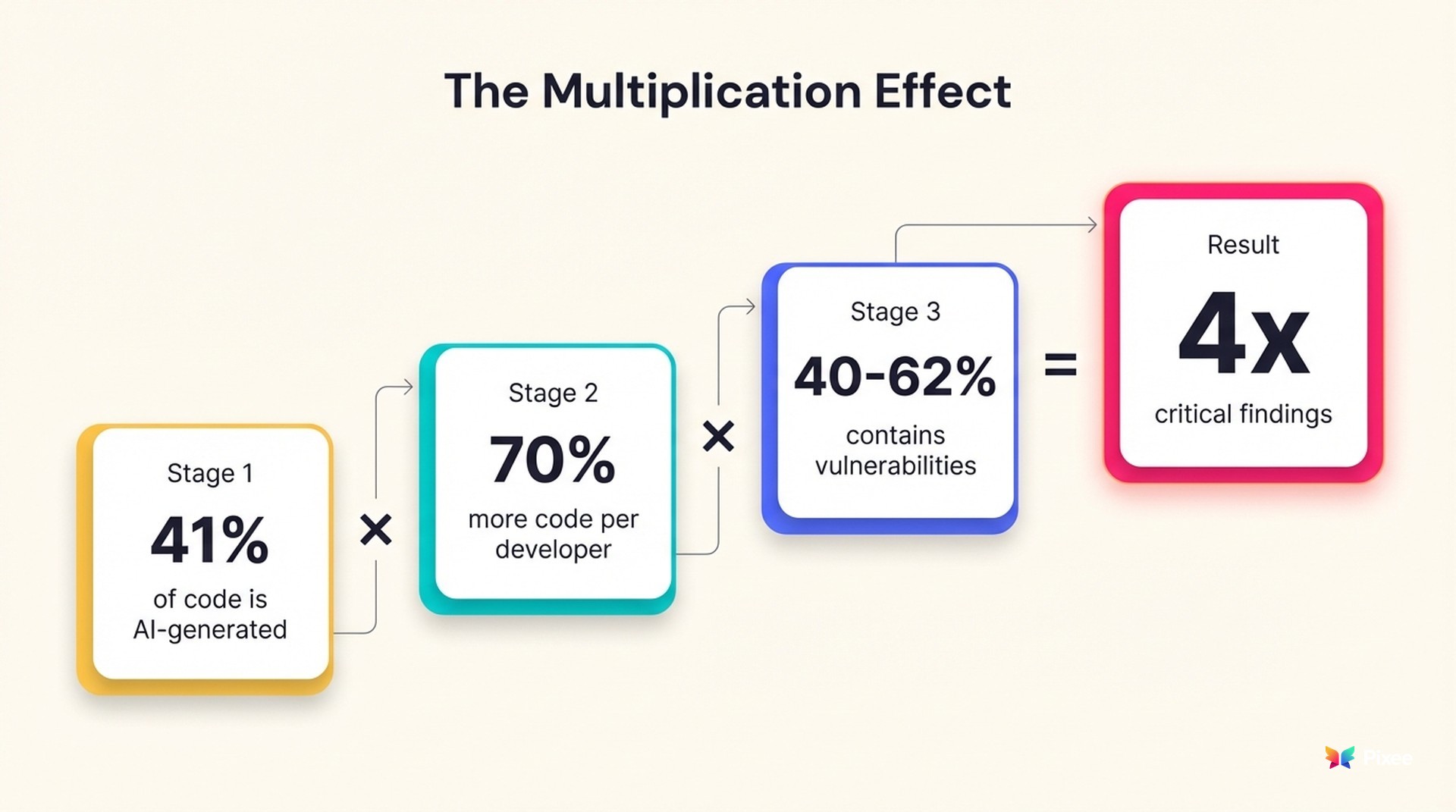

Start with the input side. As of early 2026, 41% of all code is AI-generated. Developers using AI assistants produce 70% more code than they did a year ago. That productivity gain is real and measurable, and no engineering leader is going to give it back.

Now look at what that code contains. Per the same OX analysis, 40-62% of AI-generated code contains security vulnerabilities or design flaws. Developers report spending 45% more time debugging AI-generated code in production, even after it passes QA and staging. The speed advantage on the front end creates a security debt on the back end.

Multiply those two trends together. More code, with a higher defect density, flowing into repositories faster than any human review process was designed to handle. OX Security's 4x critical risk increase is the predictable result. As OX Security CEO Neatsun Ziv put it: "We're not just seeing more alerts. We're seeing materially more real risk year-over-year."

OX Security's research describes this as "insecurity by dumbness." AI coding tools behave like talented but inexperienced junior developers. They lack architectural judgment and security awareness. The issue is not that AI writes worse code line-for-line. It is that vulnerable code reaches production at unprecedented speed, overwhelming traditional code review processes that were designed for human-paced development.

As Brian Krebs observed in his April Patch Tuesday coverage: "more bugs are found now because AI helps discover them faster." He was talking about the discovery side. The generation side is moving just as fast.

Your Attack Surface Expands Before Scanners Even Run

Defect density in committed code is only part of it. GitGuardian's 2026 State of Secrets Sprawl Report found that AI-assisted commits leak secrets at roughly double the baseline rate. Claude Code-assisted commits showed a 3.2% secret leak rate compared to 1.5% for human-only commits. Across public GitHub, 28.6 million new secrets were exposed in 2025, a 34% year-over-year increase. AI coding assistants like Cursor, Claude Code, and Copilot now execute shell commands and read arbitrary files during development sessions. Secrets get exposed before code reaches a repository. Before any scanner runs. Before any policy triggers.

Your security architecture wasn't designed for this. Most AppSec programs are built around a scanning pipeline that activates when code enters a repository or a CI/CD system. AI coding tools have moved the risk boundary earlier, into environments where you have zero visibility.

Cisco researchers demonstrated a related problem: agentic AI memory systems create persistent attack surfaces that carry across sessions and users. When an AI coding assistant remembers context from previous sessions, that memory becomes a target. Compromise the memory, and you poison every future code suggestion the assistant makes. The attack surface isn't just the code being written. It includes the AI systems helping write it.

This Already Happened

These are not projections. Amazon experienced outages in March that were traced to AI-assisted code changes deployed to production without proper approval. Georgia Tech's Vibe Security Radar project identified at least 35 new CVEs directly linked to AI-generated code in March 2026 alone. Researchers estimate the real number is five to ten times higher across the open-source ecosystem.

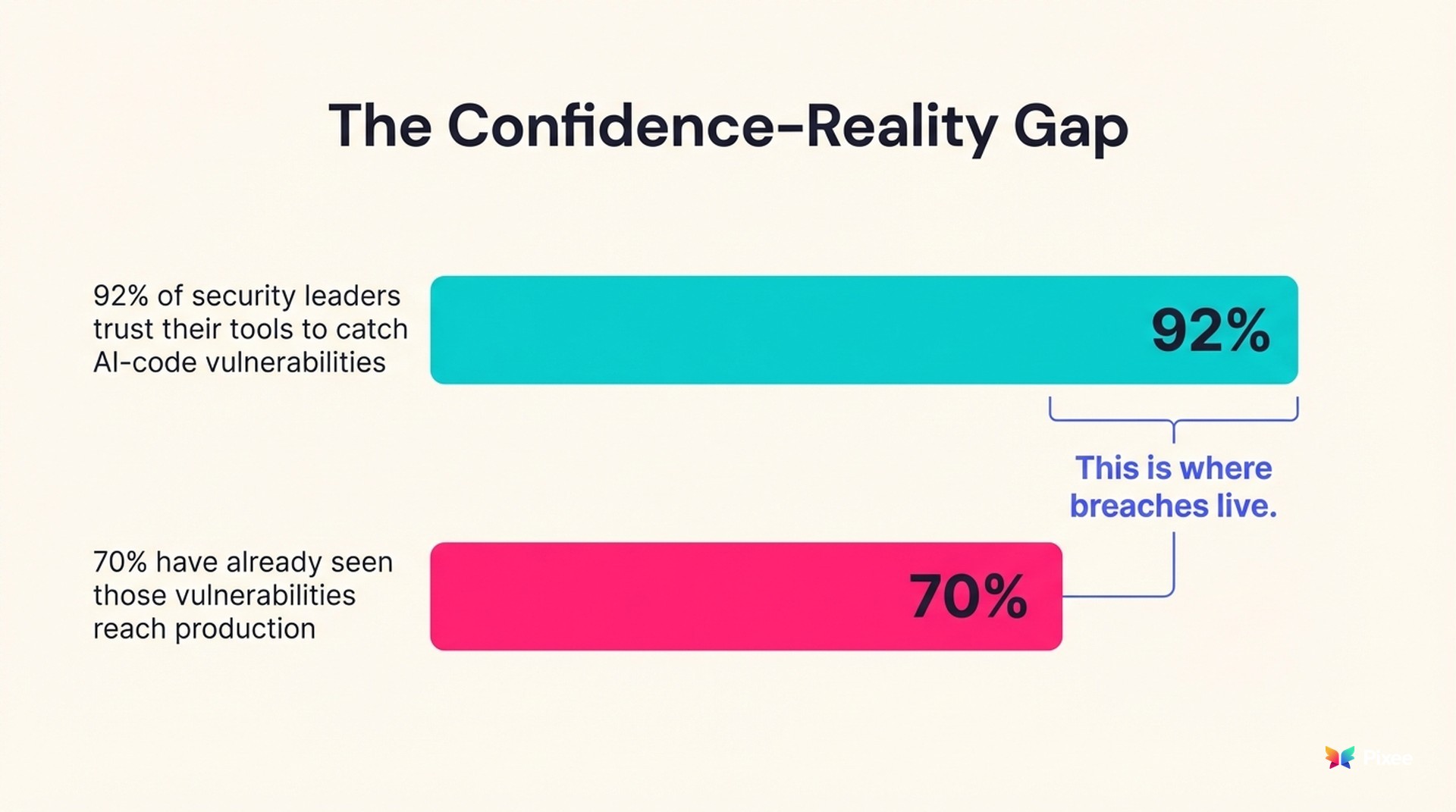

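Meanwhile, ArmorCode's 2026 State of AI Risk Management report found a gap between confidence and reality that should concern every security leader. 92% of leaders trust their tools to find AI-code vulnerabilities. 70% have already seen those vulnerabilities reach production. Assuming both figures describe the same respondents, the two groups must overlap: at least 62% of leaders both trust their tools and have already watched AI-code vulnerabilities reach production. That overlap between confidence and outcome is where breaches live.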

The Staffing Math That Doesn't Work

Your developers adopted AI coding tools because the productivity gains are substantial. Your scanners now produce 4x more critical findings because those tools generate code with a higher vulnerability rate. Your security team headcount did not quadruple. In most cases, it didn't change at all.

The industry's response to rising finding volumes has historically been "add more scanning." More SAST rules. More SCA checks. More container scanning. Each new scanner adds its own false positive rate on top of the existing pile.

Current false positive rates sit at 60-70% across the industry, with some tools hitting 71-88% depending on configuration. If you haven't already built a framework for triaging those false positives, each new scanner makes the backlog worse, not better.

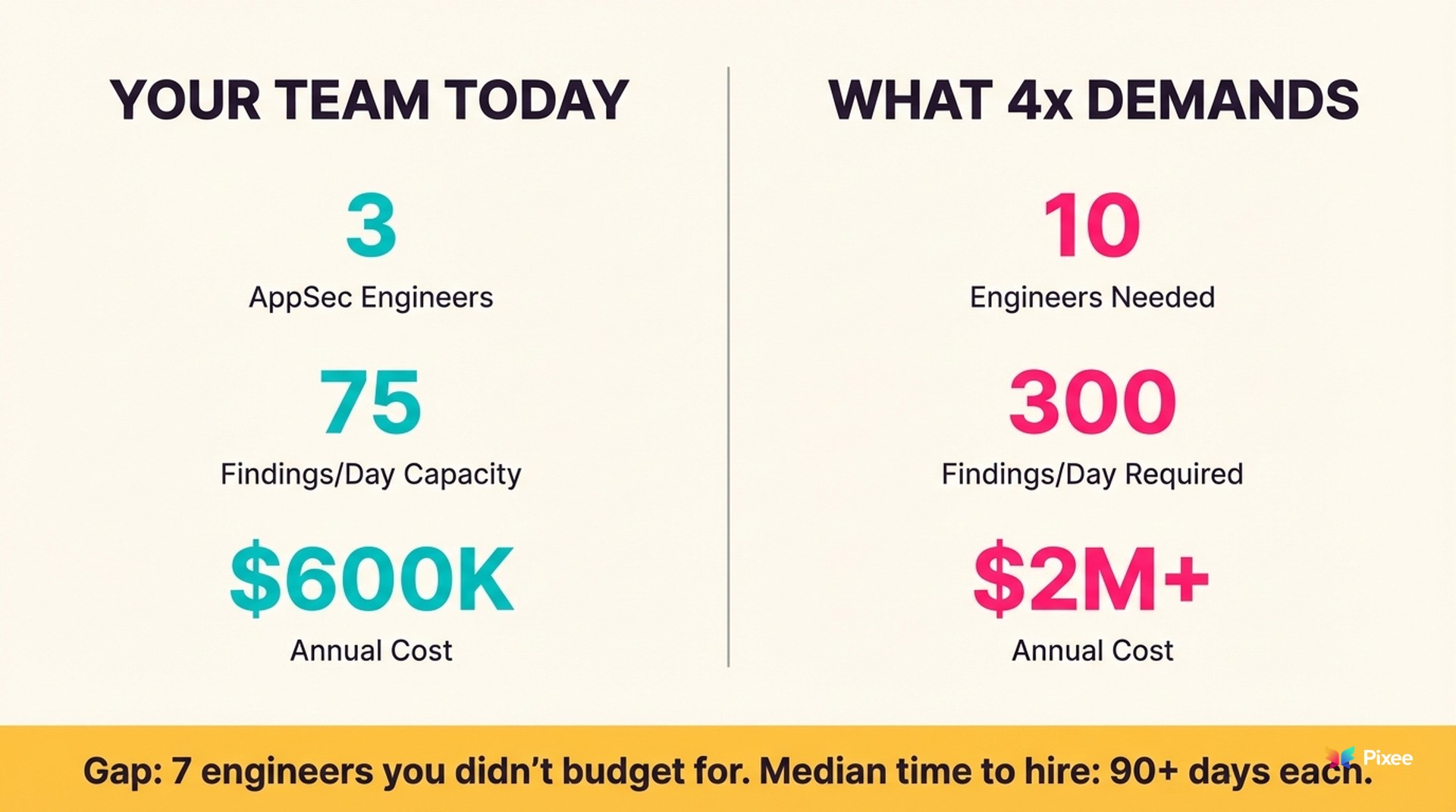

A security engineer reviewing findings manually can process 20-30 per day with adequate investigation. Do the math on your own team. If you have three AppSec engineers, they can process roughly 75 critical findings per day. At 4x volume, you need to process 300. Even at the top of that range, 30 findings per engineer per day, that is a 10-person team. At $200K+ per engineer, that is $2M+ in annual headcount, most of which you did not budget for.
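The arithmetic can be checked with a short sketch. The per-engineer rates, team size, 4x multiplier, and cost floor come from the paragraph above; the function name and the choice to round up to whole engineers are illustrative assumptions.

```python
import math

def engineers_needed(findings_per_day: int, rate_per_engineer: int) -> int:
    """Smallest whole number of engineers that can clear the daily volume."""
    return math.ceil(findings_per_day / rate_per_engineer)

# Figures from the text: 3 engineers, 20-30 findings per engineer per day, 4x volume.
current_team = 3
baseline_volume = current_team * 25   # midpoint rate: 75 findings per day
new_volume = baseline_volume * 4      # 300 findings per day
cost_per_engineer = 200_000           # "$200K+" treated as a floor

for rate in (20, 25, 30):
    team = engineers_needed(new_volume, rate)
    print(f"{rate}/day -> {team} engineers, ${team * cost_per_engineer:,}+ per year")
```

At 30 findings per engineer per day the 10-person, $2M+ figure holds. At the 25-per-day midpoint the same volume needs 12 engineers, and at 20 per day it needs 15.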

Hiring won't close that gap. The talent market for AppSec engineers hasn't grown to match demand. Median time to fill an AppSec role already exceeds 90 days. Even with unlimited budget, the people do not exist in sufficient numbers. You are not going to quadruple your security team. So the volume has to be handled differently.

A Two-Front War Nobody Is Talking About

OX Security's 4x increase is year-over-year and pre-Mythos (Anthropic's advanced vulnerability-discovery model). That data reflects AI coding tools generating vulnerable code at a rate that outpaces human review. It does not yet include the acceleration coming from the other direction.

When AI models get better at discovering vulnerabilities (and they already are), the finding volume increases from both sides simultaneously. More vulnerabilities being created by AI-assisted development. More vulnerabilities being discovered by AI-assisted scanning. The team in the middle, responsible for triaging and fixing, absorbs pressure from both directions.

Early data suggests the remediation side is already losing. Less than 1% of vulnerabilities discovered through advanced AI scanning have been patched so far. Discovery is scaling. Remediation is not.

Mean time to remediate already sits at 252 days. Exploit windows are measured in hours. The Cloud Security Alliance's MythosReady paper, authored by 60+ CISOs from Google, Netflix, Cloudflare, and Wells Fargo, acknowledged the core dynamic bluntly: "We cannot outwork machine-speed threats."

That was about AI-accelerated offense. The same logic applies to AI-accelerated code generation. You cannot outwork machine-speed code production with human-speed security review.

What the 4x Actually Means for Your Program

If you run an AppSec program, the 4x finding increase changes your planning assumptions in three concrete ways.

Your backlog projections are wrong. Any remediation plan built on last year's finding volume is already obsolete. If your team resolved 1,000 critical findings last year and this year produces 4,000, your backlog doesn't just grow. It compounds, because the oldest unfixed findings accumulate exploit exposure time while new ones arrive.
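A minimal sketch of that projection, assuming findings arrive evenly through the year and resolution capacity stays at last year's 1,000. The monthly granularity, the function name, and the "finding-months" exposure measure are illustrative assumptions, not part of the OX data.

```python
def project_backlog(arrivals_per_year: int, capacity_per_year: int, months: int = 12):
    """Return the open-finding count at the end of each month."""
    arrivals = arrivals_per_year / 12
    capacity = capacity_per_year / 12
    backlog = 0.0
    history = []
    for _ in range(months):
        # New findings arrive; the team closes as many as capacity allows.
        backlog = max(0.0, backlog + arrivals - capacity)
        history.append(round(backlog))
    return history

last_year = project_backlog(1_000, 1_000)
this_year = project_backlog(4_000, 1_000)

print(last_year[-1])   # 0: capacity matched arrivals
print(this_year[-1])   # 3000: open findings after 12 months
print(sum(this_year))  # 19500: finding-months of accumulated exposure
```

Under these assumptions the open count grows linearly, but the accumulated exposure grows much faster, because every unresolved finding keeps aging while new ones arrive.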

Your false positive ratio matters more than ever. At 1x volume, a 60% false positive rate means your engineers waste time but can still get through the real findings. At 4x volume, the same rate means the noise alone exceeds what your team can review, and real vulnerabilities age past their exploit windows while they wait in the queue. The ratio didn't change. The absolute volume of wasted time did.
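To make the effect concrete, here is a rough sketch that reuses the three-engineer example from earlier (about 75 findings reviewed per day). It assumes that, without pre-filtering, the findings a team reviews contain the same share of false positives as the full queue. The function and field names are hypothetical.

```python
def daily_triage(volume: int, fp_rate: float, capacity: int) -> dict:
    """Split one day's findings into noise and real, given fixed review capacity."""
    reviewed = min(volume, capacity)
    real_total = round(volume * (1 - fp_rate))
    # Assumption: reviewed findings mirror the overall false positive ratio.
    real_reviewed = round(reviewed * (1 - fp_rate))
    return {
        "noise_reviewed": reviewed - real_reviewed,
        "real_reviewed": real_reviewed,
        "real_unreviewed": real_total - real_reviewed,
    }

print(daily_triage(volume=75, fp_rate=0.6, capacity=75))
# {'noise_reviewed': 45, 'real_reviewed': 30, 'real_unreviewed': 0}
print(daily_triage(volume=300, fp_rate=0.6, capacity=75))
# {'noise_reviewed': 45, 'real_reviewed': 30, 'real_unreviewed': 90}
```

The team does the same work on both days. The difference is the 90 real findings per day that nobody looks at.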

Tool evaluation criteria need updating. When finding volume was manageable, the primary question for any security tool was "does it find things?" That question is now insufficient. The right question is "does it help me process findings faster than they arrive?" A tool that adds to your finding volume without reducing your triage burden is making the problem worse, regardless of its detection accuracy.

Structural Responses, Not Incremental Ones

Teams navigating the 4x increase share a common trait: they stopped treating finding volume as a staffing problem and started treating it as an engineering problem. That plays out differently depending on the organization, but the successful responses fall into a few categories.

Triage automation to separate signal from noise. If 60-70% of your findings are false positives, the highest-leverage move is eliminating noise before a human touches it. Exploitability analysis, reachability checks, and context-aware prioritization can cut triage burden dramatically. This is not optional at 4x volume. It is the difference between a team that spends its time on real vulnerabilities and a team that drowns in alerts.

Remediation automation for the categories that do not require human judgment. Dependency upgrades, fixes for hardcoded values, and configuration corrections represent a large percentage of findings and follow predictable patterns. Automating them frees your engineers for the architectural and business-logic vulnerabilities that actually need human expertise.

Developer security guardrails at the point of code generation. If AI coding tools are the input source for the 4x increase, the most direct intervention is at the generation layer. Secret scanning hooks in AI coding sessions, prompt-level security constraints, and pre-commit validation all address the problem before it becomes a finding.
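As one illustration of pre-commit validation, the sketch below scans the lines a commit would add for a few secret-like patterns. The three patterns are illustrative assumptions and far from comprehensive; a real hook would rely on a maintained rule set. `find_secrets` and `staged_diff` are hypothetical names, and the sample key is the placeholder value AWS uses in its own documentation.

```python
import re
import subprocess

# Illustrative patterns only; a production hook would use a maintained rule set.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN (?:[A-Z]+ )?PRIVATE KEY-----"),
    "hardcoded_credential": re.compile(
        r"(?i)\b(?:api_key|secret|token|password)\b\s*[:=]\s*['\"][^'\"]{12,}['\"]"
    ),
}

def find_secrets(diff_text: str) -> list[tuple[int, str]]:
    """Return (line number within the diff, pattern name) for suspicious added lines."""
    hits = []
    for number, line in enumerate(diff_text.splitlines(), start=1):
        # Only inspect lines the commit adds, skipping the "+++ b/file" header.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((number, name))
    return hits

def staged_diff() -> str:
    """Read staged changes; meant to be called from a pre-commit hook, not run here."""
    result = subprocess.run(
        ["git", "diff", "--cached", "--unified=0"],
        capture_output=True, text=True, check=False,
    )
    return result.stdout

sample = "\n".join([
    "+++ b/config.py",
    '+AWS_KEY = "AKIAIOSFODNN7EXAMPLE"',
    "+timeout = 30",
    '-password = "old-value-removed-here"',
])
print(find_secrets(sample))  # [(2, 'aws_access_key_id')]
```

In a hook script, the same check would run as `find_secrets(staged_diff())` and exit non-zero when the list is non-empty, which blocks the commit. The same function could be pointed at files an AI assistant is about to read or write, though that wiring depends on the assistant and is not shown.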

Risk-based prioritization tied to your actual exposure. Not every finding in that 4x volume carries equal risk. Connecting finding severity to runtime reachability, internet exposure, and business criticality lets your team focus on the 5-10% of findings that represent genuine exploitation risk rather than treating every critical as equal.
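A minimal sketch of how those three signals might be combined with scanner severity. The `Finding` fields, the base scores, and every multiplier are hypothetical values chosen for illustration; they would need tuning against your own environment and incident history.

```python
from dataclasses import dataclass

@dataclass
class Finding:
    id: str
    severity: str            # scanner-reported: "critical", "high", "medium", "low"
    reachable: bool          # is the vulnerable code path reachable at runtime?
    internet_exposed: bool   # is the affected service reachable from the internet?
    business_critical: bool  # does it sit on a revenue or regulated-data path?

# Hypothetical weights, not an industry standard.
SEVERITY_BASE = {"critical": 10, "high": 7, "medium": 4, "low": 1}

def risk_score(f: Finding) -> float:
    """Scale scanner severity by exposure context."""
    score = float(SEVERITY_BASE[f.severity])
    if not f.reachable:
        score *= 0.1   # unreachable code is heavily discounted, not ignored
    if f.internet_exposed:
        score *= 2.0
    if f.business_critical:
        score *= 1.5
    return score

findings = [
    Finding("A", "critical", reachable=False, internet_exposed=False, business_critical=False),
    Finding("B", "high", reachable=True, internet_exposed=True, business_critical=True),
    Finding("C", "critical", reachable=True, internet_exposed=False, business_critical=False),
]
ranked = sorted(findings, key=risk_score, reverse=True)
print([(f.id, risk_score(f)) for f in ranked])
# [('B', 21.0), ('C', 10.0), ('A', 1.0)]
```

Here the reachable, internet-facing "high" outranks both criticals, and the unreachable critical drops to the bottom of the queue.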

None of this replaces security engineers. It makes sure they spend their time on work that actually requires their expertise, instead of drowning in a backlog that grows faster than any hiring plan can address.

The 4x increase is here. It preceded the AI-accelerated discovery wave that the industry is bracing for. If your AppSec program is still sized for last year's finding volume, the gap between what your scanners produce and what your team can process is already wider than you think.

The data already told you. The question is what you do next.

This analysis expands on findings from AppSec Weekly, April 15, 2026. For coverage of the Mythos response plans referenced above, see AppSec Weekly, April 8, 2026.