Platform Engineering Security: From Gates That Block to Guardrails That Ship

The average internal developer platform has 10% adoption.

That number comes from Humanitec's State of Platform Engineering Vol. 4, and it should bother every VP of Engineering who signed off on a platform team in the last two years. Gartner predicted 80% of large software engineering organizations would have platform teams by 2026. CNCF's Q1 2026 survey found 28% of developers already work for organizations with dedicated platform teams, and 88% of backend developers work in standardized DevOps environments.

The teams exist. The platforms got built. Developers aren't using them.

The usual explanation is that IDPs abstracted the wrong thing. They made Kubernetes easier or standardized CI pipelines, and developers said "fine, but that wasn't my biggest problem." The biggest source of unwanted complexity in modern development isn't infrastructure provisioning. It's security. And most platforms don't touch it.

Platform engineering security isn't a feature you add to the roadmap for Q3. It's the reason developers would actually adopt the platform in the first place.

Platform Teams Already Own the Control Points

Here's what's odd. Platform teams already control the surfaces where security matters most. They own the CI/CD pipeline. They define the deployment templates. They maintain the service scaffolding. They manage dependency policies. These are exactly the points where security controls are most effective, and most platform teams haven't connected the two.

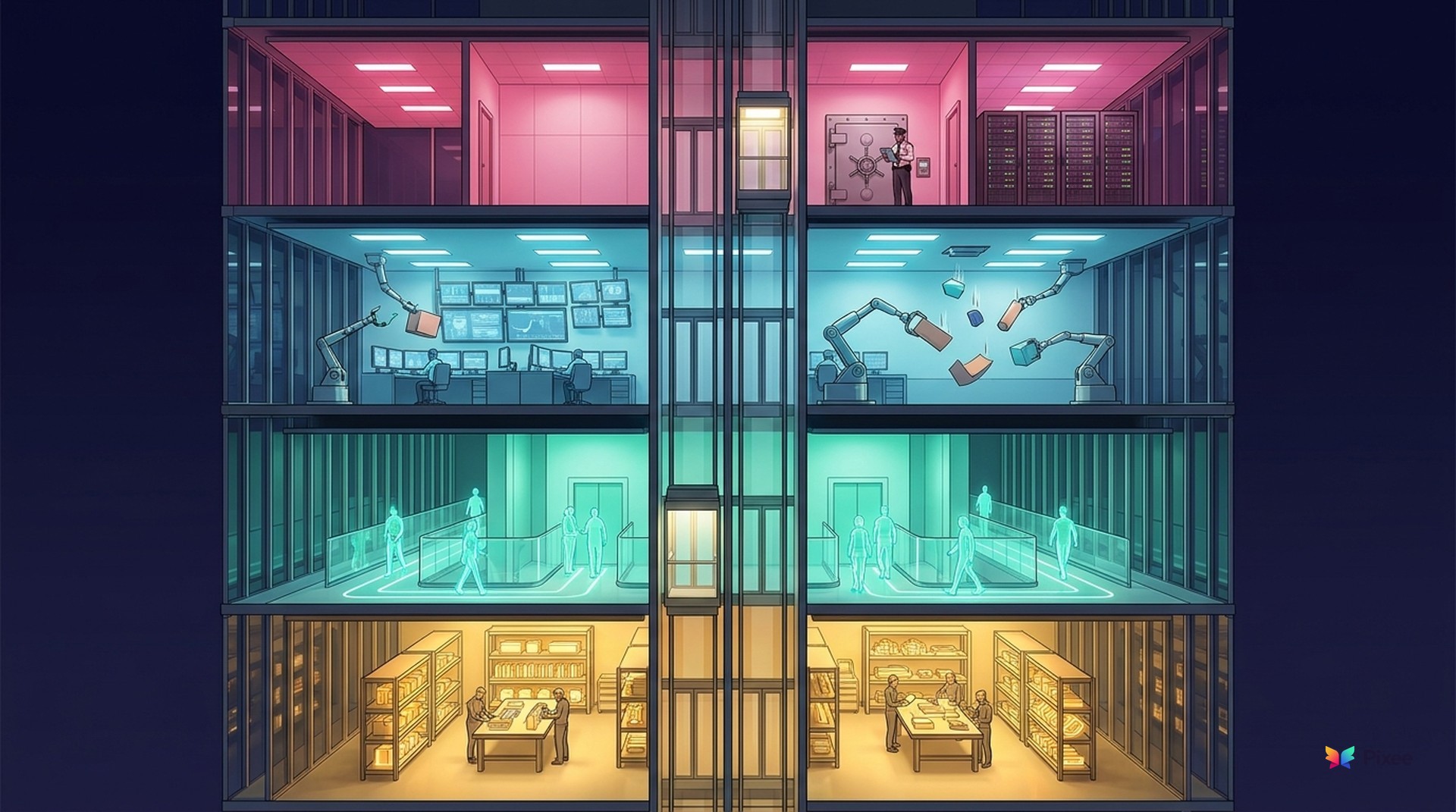

Google Cloud published a taxonomy of four platform engineering control mechanisms in August 2025 that makes this concrete. The taxonomy defines golden paths, guardrails, safety nets, and manual checkpoints as the four distinct ways a platform team can shape engineering behavior. It works because it separates what most organizations lump together under "security policy" into four mechanisms with different failure modes and appropriate uses.

Netflix figured this out early. Their "paved roads" model provides secure-by-default central platforms so engineers can focus on business value. More than 90% of Netflix engineers stay on the road because the paved road is easier than the alternative, not because someone mandated it. Backstage, the CNCF-graduated developer portal, now has 3,400+ adopters including Airbnb, Booking.com, and Philips building IDPs on the same premise.

But 56% of platform teams have existed for less than two years, according to Humanitec. Most are still working on the basics, and 29.6% don't measure success at all. Even so, embedding security into the platform isn't a separate workstream. It's choosing security-native defaults for infrastructure you're already building.

As one VP of Engineering at a mid-market security customer put it to us: "The most valuable resource we have is attention." The platform either reduces the cognitive load of security decisions or it adds to it. Mature platform teams report 40-50% reductions in cognitive load for developers. That doesn't happen by accident. It happens when the platform absorbs security complexity instead of routing it to individual engineers.

Shift-Left Failed Because It Shifted Blame, Not Capability

The application security industry spent two decades telling developers to "shift left." We invested billions in developer training, IDE plugins, and security education. The results are in.

68% of developers cannot confidently remediate OWASP Top 10 vulnerabilities without external guidance, according to the Cloud Security Alliance. 86% of developers do not view application security as a top priority. 55% of companies cannot quantify the impact of their shift-left efforts at all, per Forrester.

This isn't a failure of effort. It's a failure of model. Shift-left assumed developers would become part-time security practitioners. They didn't. Not because they're lazy or don't care, but because they have a full-time job that isn't security.

The downstream effects are predictable. Atlassian's State of Developer Experience 2025 found 63% of developers say leaders don't understand their pain points. Cycode's 2025 State of ASPM Report found 65% of teams bypass security tools, dismiss findings, or delay fixes due to noise and alert fatigue. And 95-98% of AppSec alerts do not require action.

We see this in customer conversations. A Head of AppSec at a large networking equipment company described the developer friction directly: "There's been an uphill battle with that because, obviously, clearly, in the beginning, this is behavioral change for most developers. They gotta stop in their tracks now. Fix the vulnerability."

A VP of Engineering at a security services company was blunter: "Developers voted with their feet a long time ago. You can go to the marketplace and for all these IDEs, developers don't install security tools."

At one large automotive retailer, a DevSecOps team of 14 supports 500 developers. Their assessment of how developers handle security findings: "Generally, it gets ignored."

More training won't fix this. Neither will more scanners. What will is infrastructure that absorbs security decisions so developers don't have to make them. That's what a well-built IDP does.

Four Ways to Embed Security in Your Internal Developer Platform

Google Cloud's taxonomy provides four distinct mechanisms, each with a different role. Understanding which one to apply where is the difference between security that developers accept and security that developers route around.

1. Golden Paths: Secure Defaults

A golden path is a pre-built template or scaffolding with security baked in. Service templates that include input validation, authentication middleware, structured logging, and pinned dependencies by default. When a developer scaffolds a new microservice and it ships with OWASP-compliant defaults, that's a golden path.

Use golden paths for new service creation, infrastructure provisioning, and CI/CD pipeline configuration. What matters is that the secure option is the easy option. Netflix's paved roads work because going off-road requires more effort, not less.
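One way to keep a golden path honest is to check every scaffolded service against the template's defaults. The sketch below is a minimal illustration, not a real scaffolding tool: the feature names in `REQUIRED_DEFAULTS`, the function names, and the assumption that dependencies live in a pip-style requirements list are all hypothetical.

```python
# Hypothetical feature flags a service template is assumed to expose.
REQUIRED_DEFAULTS = frozenset(
    {"input_validation", "auth_middleware", "structured_logging"}
)


def unpinned_dependencies(requirements: list[str]) -> list[str]:
    """Return requirement entries that do not pin an exact version with '=='."""
    loose = []
    for line in requirements:
        entry = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if entry and "==" not in entry:
            loose.append(entry)
    return loose


def template_gaps(enabled_features: set[str], requirements: list[str]) -> list[str]:
    """List what a scaffolded service is missing relative to the golden path."""
    gaps = [
        f"missing default: {name}"
        for name in sorted(REQUIRED_DEFAULTS - enabled_features)
    ]
    gaps += [
        f"unpinned dependency: {dep}"
        for dep in unpinned_dependencies(requirements)
    ]
    return gaps
```

An empty list means the service is still on the path. Anything else is a candidate for an automated fix rather than a ticket.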

The most mature organizations are extending golden paths upstream of code. They review architectural decisions and design documents for security implications before implementation begins. Fixes at the design stage cost 10-100x less than fixes discovered after code is built. This is the highest-leverage golden path: catch the structural decision that creates a class of vulnerabilities, not the individual instances after they ship.

2. Guardrails: Automated Policy Enforcement

A guardrail is an automated check that redirects toward secure paths without blocking deployment. A CI pipeline that detects a vulnerable base image, auto-substitutes a patched version, and opens a pull request explaining the change. The developer isn't blocked. The default action is safe. The exception path is documented.

Use guardrails for dependency management, container configurations, secret detection, and infrastructure-as-code validation. DORA research shows high-performing teams spend 50% less time remediating security issues. The mechanism is guardrails, not heroic individual effort.

Guardrails also cover implementation drift. As code is written, the gap between what was designed and what actually shipped widens. Authentication flows get implemented differently than the approved specification. Data handling diverges from the patterns the security team reviewed. Mature guardrails detect this drift early, before it compounds into architectural security debt.

Most guardrail implementations fail at the same point: they detect a finding and route it to a developer as a notification. That's not a guardrail. That's a gate with a softer name. The developer still stops, assesses whether the finding is real, figures out the fix, and validates it won't break anything. You've automated the alert, not the work. A guardrail that actually works does two things: it determines whether the finding is exploitable in the code's actual execution context (triage), and if it is, generates a fix that matches the codebase's patterns and conventions (remediation). The developer reviews a proposed change, not a problem to solve. That's the difference between 95% of findings ignored and merge rates above 70%.

Here's what that looks like on a Tuesday morning. A scanner flags a vulnerable dependency in your service. Without a platform guardrail: the alert lands in a dashboard, a developer eventually triages it, spends 20 minutes confirming the library is actually reachable in their code path, writes the version bump, runs the test suite, opens a PR, and waits for review. Two to four hours, if it happens at all. With a working guardrail: the platform evaluates reachability automatically, determines the dependency is exploitable in this service's context, generates a fix PR that pins to the patched version with passing tests, and explains the reasoning in the PR description. The developer reviews and merges in minutes. Same finding. Radically different experience.
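The triage-then-remediate flow above can be sketched in a few lines. This is an assumption-heavy illustration: real reachability analysis needs a call graph, which is stood in for here by a plain set of symbols the service is known to call. Every name (`Finding`, `FixProposal`, `guardrail`, the `examplelib` package) is hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Finding:
    package: str
    installed_version: str
    patched_version: str
    vulnerable_symbols: frozenset  # functions named by the advisory


@dataclass(frozen=True)
class FixProposal:
    title: str
    body: str
    new_pin: str


def is_reachable(finding: Finding, symbols_called: set) -> bool:
    """Triage: the finding matters only if the service calls a vulnerable symbol."""
    return bool(finding.vulnerable_symbols & symbols_called)


def guardrail(finding: Finding, symbols_called: set) -> Optional[FixProposal]:
    """Return a fix proposal for reachable findings, or None to suppress noise."""
    if not is_reachable(finding, symbols_called):
        return None  # never lands in a developer's queue
    hits = sorted(finding.vulnerable_symbols & symbols_called)
    return FixProposal(
        title=f"Bump {finding.package} to {finding.patched_version}",
        body=(
            f"{finding.package} {finding.installed_version} is vulnerable and "
            f"this service calls: {', '.join(hits)}. "
            f"Pinning to {finding.patched_version}."
        ),
        new_pin=f"{finding.package}=={finding.patched_version}",
    )
```

The design choice that matters is the return type. The developer receives either nothing or a proposed change with its reasoning attached, never a bare alert.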

3. Safety Nets: Runtime Detection and Auto-Remediation

A safety net catches and corrects security issues that slip through golden paths and guardrails. Runtime vulnerability detection that triggers automated pull requests when a production dependency gets a CVE. Emergency patching workflows that don't require a developer to context-switch from their current sprint.

Use safety nets for production monitoring, dependency vulnerability response, and zero-day response. Veracode's 2026 State of Software Security reports the industry median MTTR at 252 days, up 47% since 2020. Safety nets exist because 252 days is not a defensible response time.
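At its core, a safety net for dependency CVEs is a join between a new advisory and an inventory of what is actually deployed. The sketch below assumes plain dotted version strings and an in-memory inventory. The `Advisory` shape, the placeholder CVE identifier, and the service names are illustrative, not taken from any real feed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Advisory:
    cve_id: str
    package: str
    fixed_in: tuple  # first patched version, e.g. (2, 4, 1)


def parse_version(text: str) -> tuple:
    """Parse a plain dotted version like '2.4.0' (no pre-release tags)."""
    return tuple(int(part) for part in text.split("."))


def services_needing_patch(advisory: Advisory, deployed: dict) -> list:
    """Return services running a version older than the first patched one.

    `deployed` maps service name -> {package name: version string}.
    """
    affected = []
    for service, deps in sorted(deployed.items()):
        version = deps.get(advisory.package)
        if version is not None and parse_version(version) < advisory.fixed_in:
            affected.append(service)
    return affected


# Illustrative inventory; the CVE id is a placeholder, not a real advisory.
advisory = Advisory("CVE-0000-00000", "examplelib", (2, 4, 1))
inventory = {
    "checkout": {"examplelib": "2.4.0"},
    "search": {"examplelib": "2.10.0"},
    "billing": {"otherlib": "1.0.0"},
}
to_patch = services_needing_patch(advisory, inventory)  # only "checkout"
```

Each service in the result would then get an automated patch PR, which is what lets the response happen without pulling a developer out of their sprint.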

4. Manual Checkpoints: The Gates You Keep

A manual checkpoint is human review reserved for changes that genuinely need judgment. Cryptographic library replacements. Authentication flow redesigns. Third-party integrations that handle PII. Anything where the blast radius of a wrong decision justifies the cost of a human in the loop.

Use manual checkpoints for regulatory requirements (PCI-DSS, HIPAA), novel architectures, and high-blast-radius changes. Here's the honest part: guardrails don't eliminate all gates. They reduce them to the 5-10% of changes that actually need a human. When 90-95% of security interactions are automated, the remaining 5-10% get the attention they deserve.
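Deciding which changes still get a human can itself be an explicit, reviewable rule. The sketch below assumes changes arrive already tagged by the pipeline. The tag names and the `route_change` function are hypothetical, chosen to mirror the examples above.

```python
from enum import Enum


class Mechanism(Enum):
    GUARDRAIL = "guardrail"
    MANUAL_CHECKPOINT = "manual_checkpoint"


# Hypothetical tags for changes whose blast radius justifies human review.
HIGH_JUDGMENT_TAGS = frozenset(
    {"crypto-library", "auth-flow", "pii-integration", "regulated-scope"}
)


def route_change(tags: set) -> Mechanism:
    """Send a change to human review only if it carries a high-judgment tag."""
    if tags & HIGH_JUDGMENT_TAGS:
        return Mechanism.MANUAL_CHECKPOINT
    return Mechanism.GUARDRAIL


def checkpoint_share(changes: list) -> float:
    """Fraction of changes routed to a manual checkpoint (0.0 for no changes)."""
    if not changes:
        return 0.0
    manual = sum(
        1 for tags in changes if route_change(tags) is Mechanism.MANUAL_CHECKPOINT
    )
    return manual / len(changes)
```

Tracking `checkpoint_share` over time shows whether the gates you kept are staying in the 5-10% range or creeping back up.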

AI-Generated Code Makes Platform Security Non-Negotiable

Everything above would be important if code velocity stayed constant. It didn't.

Gartner projects at least 30% of application vulnerabilities will be caused by "vibe coding" practices by 2027. An analysis of 470 GitHub pull requests by Legit Security found AI-written code produces security flaws at 2.74x the rate of human-written code. Aikido's 2026 survey of 450 organizations found 69% have already discovered vulnerabilities introduced by AI-generated code.

Volume numbers tell the rest of the story. Ox Security's 2026 benchmark tracked 250 organizations and found critical findings per organization nearly quadrupled year-over-year, from 202 to 795. The average organization now manages 865,398 raw security alerts.

Meanwhile, the offense side of the equation shifted. Anthropic's Mythos Preview red-team paper demonstrated autonomous zero-day discovery for pennies per attempt. DARPA's AIxCC competition showed AI finding and patching vulnerabilities across 54 million lines of code at a fraction of what a human researcher would cost. The cost of discovering a vulnerability collapsed by two orders of magnitude in two years. The cost of defending against one didn't.

Anton Chuvakin named this the Patch Sound Barrier. Every organization has a maximum remediation velocity. AI-accelerated discovery permanently exceeds that ceiling for most organizations. More scanning doesn't help. At 71-88% false positive rates, every new scan layer adds triage burden faster than it adds resolved findings.
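The ceiling argument is simple arithmetic, sketched below. The weekly rates and the 80% false positive rate are made-up illustrative inputs (80% sits inside the 71-88% range cited above), and the function names are hypothetical.

```python
def backlog_after(weeks: int, found_per_week: int, max_fixed_per_week: int,
                  starting_backlog: int = 0) -> int:
    """Open findings after `weeks`, given a fixed remediation ceiling."""
    backlog = starting_backlog
    for _ in range(weeks):
        backlog = max(0, backlog + found_per_week - max_fixed_per_week)
    return backlog


def split_scan_output(raw_findings: int, false_positive_rate: float) -> tuple:
    """Split one scan layer's output into (real findings, noise to triage)."""
    noise = round(raw_findings * false_positive_rate)
    return raw_findings - noise, noise


# Discovery above the ceiling: the backlog grows without bound.
over_ceiling = backlog_after(weeks=52, found_per_week=120, max_fixed_per_week=100)

# Discovery below the ceiling: the backlog stays at zero.
under_ceiling = backlog_after(weeks=52, found_per_week=80, max_fixed_per_week=100)

# A new scan layer at an 80% false positive rate adds 4x more noise than signal.
real, noise = split_scan_output(raw_findings=1000, false_positive_rate=0.80)
```

With these inputs, a gap of 20 findings per week becomes a backlog of 1,040 after a year, and the extra scan layer contributes 800 findings that need triage for every 200 worth fixing.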

Platforms raise the ceiling. Automated guardrails, golden paths, and safety nets absorb volume at the infrastructure layer. Manual triage of individual findings cannot scale to cover AI-generated code velocity. This is the structural argument for platform engineering security, and it isn't going away.

Five Metrics That Prove Your IDP Security Is Working

Humanitec found 29.6% of platform teams don't measure success at all. If you're going to embed security into your IDP, here's how you know it's working.

1. Developer adoption rate. Target: above 60%. If platform adoption is still at the ~10% industry average, the guardrails aren't protecting anything. Adoption is the prerequisite for everything else.

2. Security PR merge rate. Target: above 50%. If your platform sends automated security fix PRs and developers close them unreviewed, your automation is generating noise, not fixes. A merge rate above 50% means developers trust the platform's output enough to accept it. Organizations that pair automated triage with context-aware remediation consistently see rates above 70%. The difference is whether the PR explains why this specific finding matters in this specific codebase, not just that a CVE exists.

3. Mean time to remediate. Target: days, not months. The industry median is 252 days. A platform with working safety nets and guardrails should collapse the feedback loop between finding and fix to single-digit days for automated categories.

4. False positive rate of alerts reaching developers. Target: fewer than 20% of the alerts that reach developers are false positives. The industry baseline is 95-98% noise. If your platform is routing unfiltered scanner output to developers, you've built a gate, not a guardrail.

5. Security-related deployment blocks. Target: fewer than 5% of deployments blocked by security controls. Higher than that means you have gates masquerading as guardrails. Track this weekly and treat increases as a platform bug, not a security success.
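The five targets fit in a single scorecard function. The sketch below is one possible encoding, not a standard: the `PlatformStats` fields are hypothetical counters you would pull from your own pipeline. Metric 4 is read as a false positive rate, per its heading, and "single-digit days" is taken as fewer than 10.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlatformStats:
    # All counts are assumed to be non-zero where used as a denominator.
    developers_total: int
    developers_on_platform: int
    fix_prs_opened: int
    fix_prs_merged: int
    median_days_to_remediate: float
    alerts_sent_to_developers: int
    alerts_needing_no_action: int
    deployments_total: int
    deployments_blocked_by_security: int


def scorecard(s: PlatformStats) -> dict:
    """Map each of the five metrics to True when its target is met."""
    return {
        "adoption_above_60pct":
            s.developers_on_platform / s.developers_total > 0.60,
        "merge_rate_above_50pct":
            s.fix_prs_merged / s.fix_prs_opened > 0.50,
        "mttr_single_digit_days":
            s.median_days_to_remediate < 10,
        "false_positives_below_20pct":
            s.alerts_needing_no_action / s.alerts_sent_to_developers < 0.20,
        "blocks_below_5pct":
            s.deployments_blocked_by_security / s.deployments_total < 0.05,
    }
```

Running this weekly and alerting on any key that flips to `False` treats a regression as a platform bug, which is the posture the fifth metric asks for.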

Start Here: Four Phases to Security-First Platform Engineering

Phase 1 (Weeks 1-2): Audit. Map every security touchpoint in your deployment pipeline. Count how many are gates (human approval required) versus guardrails (automated with override path). Ask your developers which security gate they complain about most. That's where you start.

Phase 2 (Months 1-3): Golden Paths. Build one secure service template that 80% of new services can use. Include authentication, input validation, structured logging, and dependency pinning. Don't try to cover every case. Ship the 80% path and measure adoption weekly. If adoption isn't climbing, the template is too restrictive or too different from how your teams actually work.

Phase 3 (Months 3-6): Guardrails. Automate the gate you identified in Phase 1. The pattern: automated default action, PR-based notification with a clear explanation, and a logged exception path for the cases that genuinely need human judgment. Measure merge rate to validate developer trust. The pattern works best when the platform handles both triage (filtering to findings that are actually exploitable in context) and remediation (generating fix PRs that match codebase conventions). Pixee automates both layers.

Phase 4 (Month 6+): Design-Phase Security. The frontier. Extend security left of code itself: review product requirements, design documents, and architectural proposals for security implications before anyone writes a line of code. Monitor implementation drift from approved patterns during development. The industry is moving here. Pixee is exploring this with design partners: agentic review of architectural decisions before code exists, catching the structural choices that create vulnerability classes downstream.

Where This Leads

The 10% IDP adoption number is a trailing indicator of a design choice. Platforms that abstract infrastructure solve a problem most developers learned to live with years ago. Platforms that absorb security complexity solve the problem developers are drowning in right now.

Platform engineering security isn't a security initiative. It's a developer experience initiative that happens to make the CISO's life better too.
