The Resolution Platform

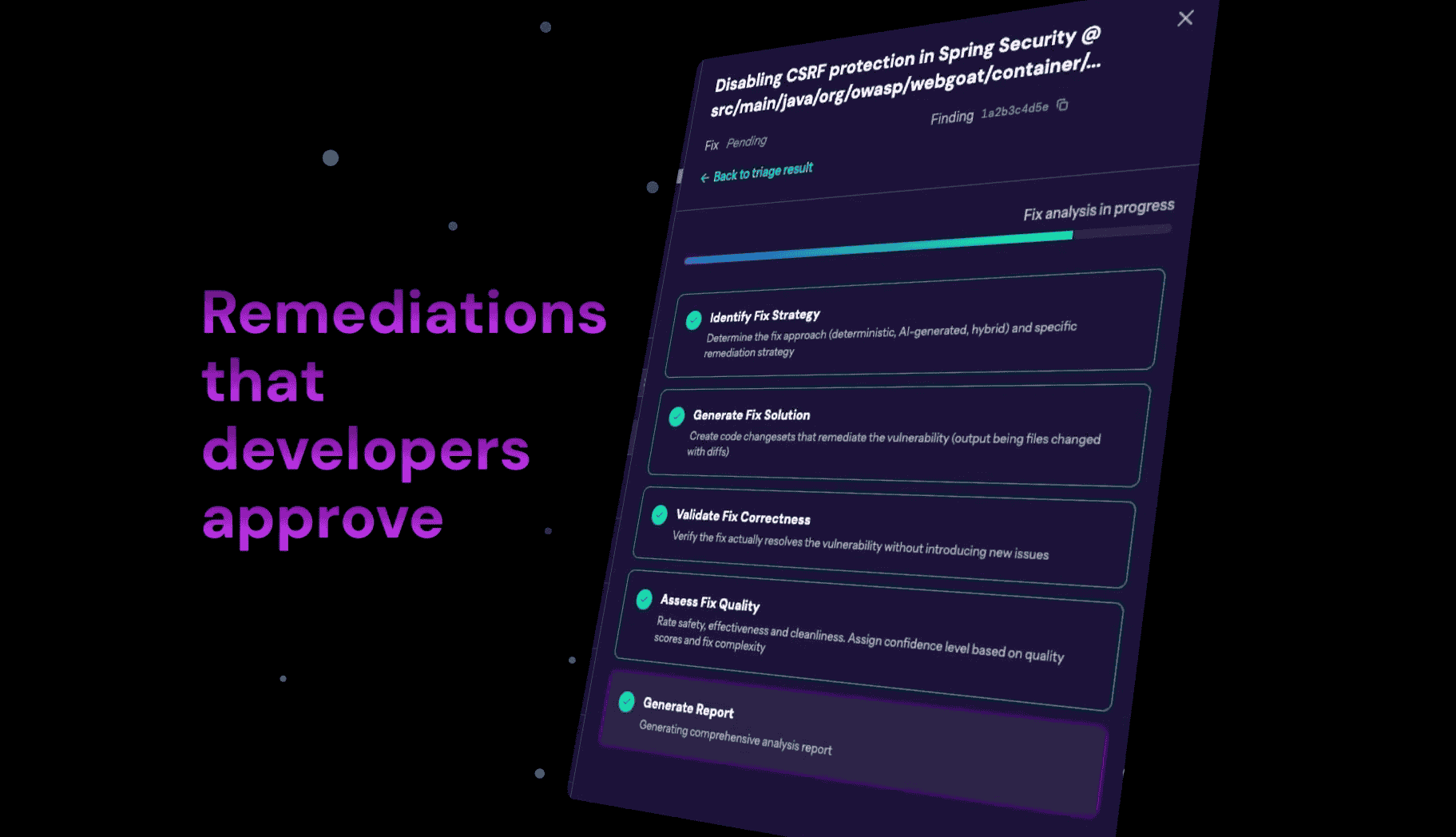

Your scanners find vulnerabilities. Pixee triages them and resolves what matters. We ingest findings from any scanner, triage, prioritize and fix what is truly exploitable.

Capabilities & Coverage

Enterprise Grade

Built to handle the complexity of modern software development. Pixee unifies findings from your entire security stack and provides the intelligent capabilities to actually fix them.

Works with your stack

Pixee connects with the tools you already use. From code repos to scanners and CI/CD, we orchestrate your entire remediation workflow.

For AppSec Teams

Eliminate 74% of manual triage time. Handle tens of thousands of repositories with current headcount.

For Developers

Reduce security work from 6 hours to 5 minutes. 76% merge rate proves fixes are production quality.

For CISOs

Mean time to remediation drops to 2 days. Meet SEC and EU CRA compliance requirements.

From Systems of Detection

To Systems of Decision

A parallel reality where every security decision leaves a trace.

Not just a snapshot. A history.

The 4 Layers of Context

How Pixee builds your organization's security memory

Process Context

Security policies, architectural patterns, governance rules.

Raw Context

Code, scanner findings, dependencies, configurations.

Kinetic Context

Exploit verification, reachability analysis, cross-scanner correlation.

Human Feedback Context

Merge/reject patterns, organizational preferences, precedents.

Choose your environment

Whether you need speed or sovereignty, Pixee runs where your code lives.